Unity best practices

What's new on this page

Technical e-books

Sample project: Gem Hunter Match

2D

Graphics and rendering

DevOps

Unity C# programming

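- 10 ways to speed up your programming workflows in Unity with Visual Studio 2019

- Best practices for formatting your C# scripts in Unity

- Naming and code style tips for C# scripting in Unity

- Create modular and maintainable code with the observer pattern

- Develop a modular, flexible codebase with the state programming pattern

- Use object pooling to boost the performance of C# scripts in Unity

- Build a modular codebase with the MVC and MVP programming patterns

- How to use the factory pattern for object creation at runtime

- How to use the command pattern for flexible and extensible game systems

- Guide to using the new AI Navigation package in Unity 2022 LTS and above

- Get started with the Unity ScriptableObjects demo

- Use ScriptableObject-based events with the observer pattern

- Use ScriptableObject-based enums in your Unity project

- Separate game data and logic in Unity with ScriptableObjects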

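UI (user interface)

Performance optimization

- Mobile game performance optimization: Expert tips on graphics and assets

- Mobile game performance optimization: Expert tips on physics, UI, and audio settings

- Mobile game performance optimization: Tips on profiling, memory, and code architecture from Unity's top engineers

- Profiling in Unity 2021 LTS: What, when, and how

- How to optimize your game with Profile Analyzer

- Tips for performance optimization in Unity: Programming and code architecture

Art and game design

Industry

Unity Gaming Services

Testing, debugging, and quality assurance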

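New e-books

Lighting and environments in the High Definition Render Pipeline in Unity 6

Download this e-book to learn about all the features included in HDRP in Unity 6 and 6.1.

UI Toolkit for advanced Unity developers (Unity 6 edition)

Read this major new guide focused on UI Toolkit features. It includes sections covering Unity 6 features such as data binding, localization, and custom controls.

Create modular game architecture in Unity with ScriptableObjects (Unity 6 edition)

Read this e-book, which collects tips and tricks from professional developers for deploying ScriptableObjects in production.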

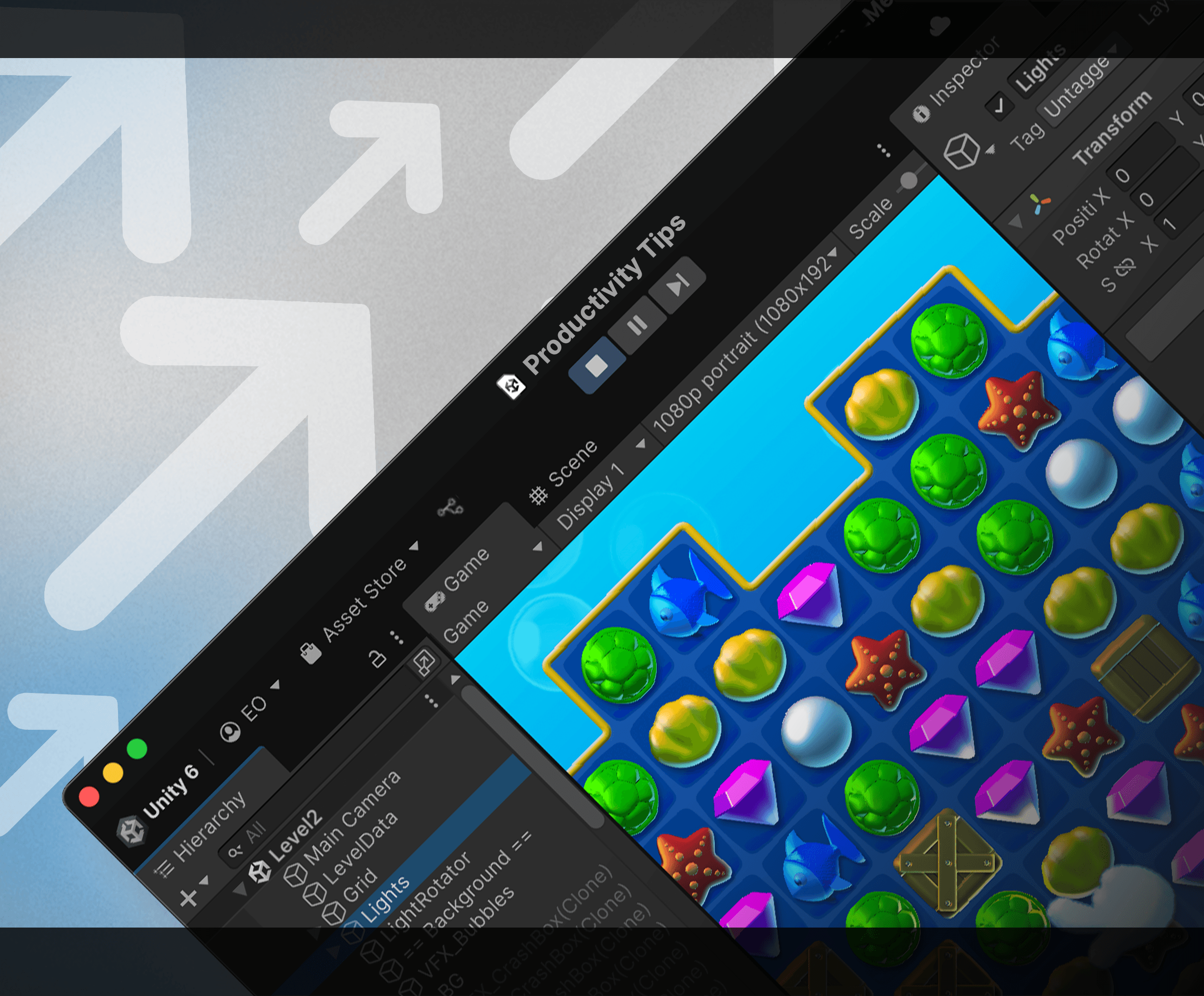

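Tips to increase productivity with Unity 6

This updated guide of more than 100 pages offers tips to speed up your workflows at every stage of game development. It is useful whether you have years of experience as a Unity developer or are just getting started.

2D game art, animation, and lighting for artists (Unity 6.3 LTS edition)

Our popular 2D e-book has been updated to cover the techniques and workflows for developing professional 2D games in Unity 6.3 LTS. Get best practices for art, design, animation, lighting, and VFX, along with tips on using 3D assets in 2D games.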

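Technical e-books for programmers

- Create modular game architecture in Unity with ScriptableObjects (Unity 6 edition)

- Tips to increase productivity with Unity 6

- Ultimate guide to profiling Unity games (Unity 6 edition)

- Introduction to DOTS concepts, features, and samples for advanced Unity developers (Unity 6 edition)

- Write clean and scalable game code with a C# style guide (Unity 6 edition)

- The definitive guide to multiplayer networking for advanced Unity developers

- Optimize your game performance for mobile, XR, and the web in Unity (Unity 6)

- Optimize your game performance for consoles and PC in Unity (Unity 6)

- Best practices for project organization and version control (Unity 6)

- Introduction to DOTS for advanced Unity developers

- Ultimate guide to profiling Unity games

- Create a C# code style guide

- Optimize your mobile game performance (Unity 2020 LTS)

- A practical guide to game development in Unity

- Optimize your console and PC game performance

- Increase productivity with Unity 2020 LTS

- Version control and project organization best practices for game developers

- Level up your coding skills with game programming patterns

- Level up your coding skills with design patterns and SOLID principles

- Create modular game architecture in Unity with ScriptableObjects

- Optimize your mobile game performance (Unity 2022 LTS)

- Optimize your console and PC game performance (Unity 2022 LTS)

- 80+ tips to increase productivity with Unity 2022 LTS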

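Technical e-books for artists and designers

- 2D game art, animation, and lighting for artists (Unity 6.3 LTS edition)

- Lighting and environments in the High Definition Render Pipeline in Unity 6

- UI Toolkit for advanced Unity developers (Unity 6 edition)

- Create popular shaders and visual effects with the Universal Render Pipeline (Unity 6 edition)

- The definitive guide to creating advanced visual effects in Unity (Unity 6 edition)

- Introduction to the Universal Render Pipeline for advanced Unity users (Unity 6)

- The definitive guide to animation in Unity

- Create virtual and mixed reality experiences in Unity

- Lighting and environments in the High Definition Render Pipeline (Unity 2022 LTS)

- Introduction to the Universal Render Pipeline for advanced Unity users (Unity 2022 LTS)

- Introduction to game level design in Unity

- Popular visual effects recipes with the Universal Render Pipeline

- User interface design and implementation in Unity

- The definitive guide to creating advanced visual effects in Unity

- The definitive guide to lighting in the High Definition Render Pipeline (HDRP), Unity 2021 LTS

- The definitive guide to lighting in the High Definition Render Pipeline (HDRP), Unity 2020 LTS

- 2D game art, animation, and lighting for artists

- The Universal Render Pipeline for advanced Unity users

- The Unity game designer playbook

- Unity for technical artists: Key toolsets and workflows (Unity 2020 LTS edition)

- Unity for technical artists: Key toolsets and workflows (Unity 2021 LTS edition)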

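New sample projects

Dragon Crashers - UI Toolkit sample project

This official UI Toolkit project provides game interfaces that showcase UI Toolkit and UI Builder workflows for runtime games. Explore this project and its companion e-book for more useful tips.

QuizU - UI Toolkit sample

QuizU is an official Unity sample that uses UI Toolkit to demonstrate various design patterns and project architecture, including MVP, the state pattern, and menu screen management.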

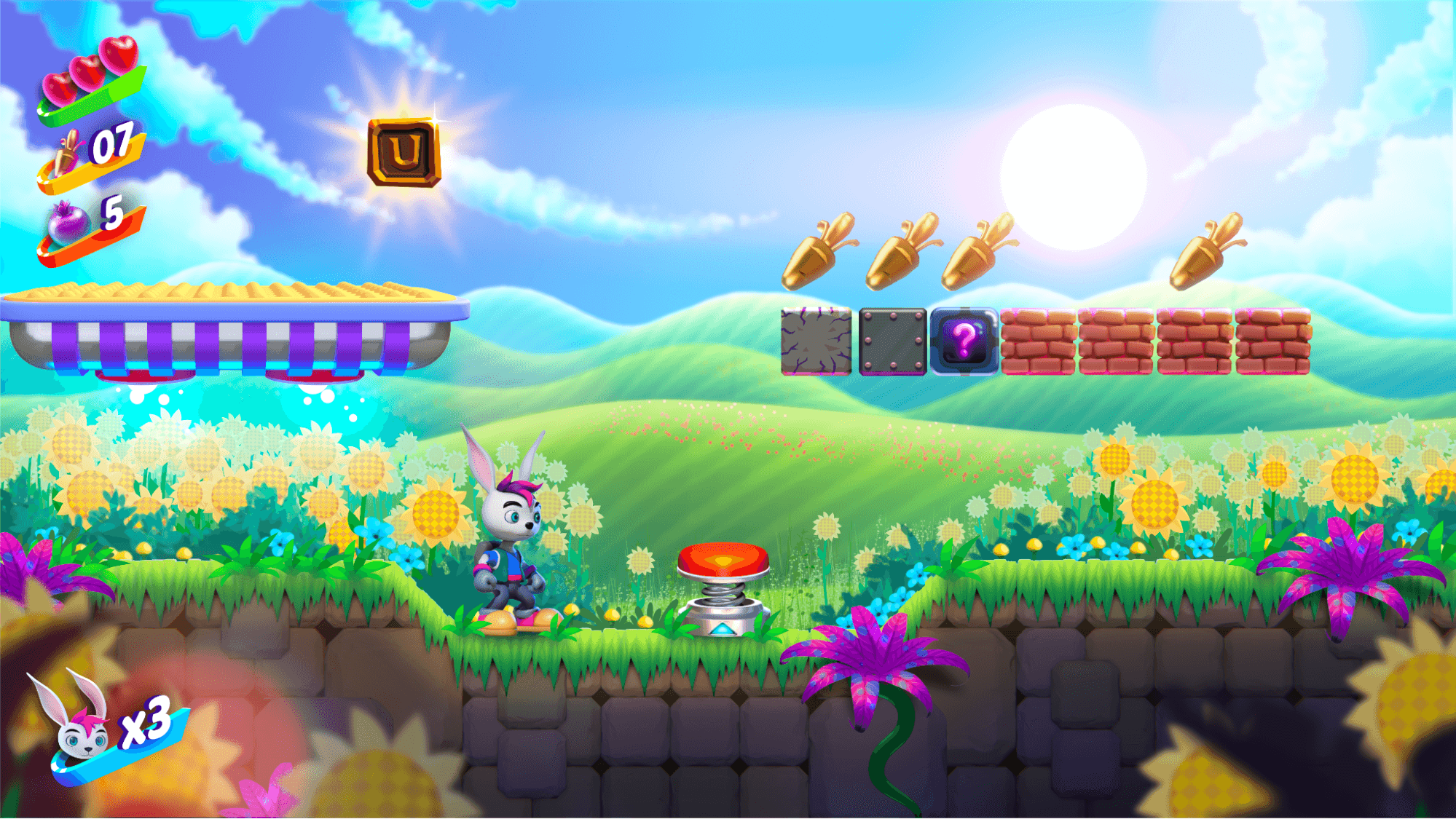

Gem Hunter Match - 2D sample project

Gem Hunter Match is an official Unity cross-platform sample project that showcases the 2D lighting and visual effects capabilities of the Universal Render Pipeline (URP) in Unity 2022 LTS.