New features and updates for platforms and the Editor

What’s new

Here’s an overview of some of the key updates for different platforms and the Editor. For full details, check out the release notes.

Optimized Frame Pacing for Android

Developed in partnership with Google’s Android Gaming and Graphics team, Optimized Frame Pacing for Android provides consistent frame rates and a smoother gameplay experience by distributing frames with less variance.
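To make "less variance" concrete, here is a minimal sketch that compares two sequences of frame presentation times with the same average frame rate. The timestamps are invented for illustration, and the helper names (`frame_intervals`, `pacing_report`) are hypothetical; this is not how Unity or Android measures or implements frame pacing.

```python
from statistics import mean, pstdev


def frame_intervals(timestamps_ms):
    """Return the gaps between consecutive frame presentation times."""
    return [b - a for a, b in zip(timestamps_ms, timestamps_ms[1:])]


def pacing_report(timestamps_ms):
    """Summarize average frame time and how much it varies."""
    intervals = frame_intervals(timestamps_ms)
    return {"mean_ms": mean(intervals), "stdev_ms": pstdev(intervals)}


# Hypothetical presentation times in milliseconds (illustrative only).
uneven = [0, 20, 50, 60, 100, 120]  # same average rate, uneven gaps
paced = [0, 24, 48, 72, 96, 120]    # same average rate, even gaps

uneven_report = pacing_report(uneven)
paced_report = pacing_report(paced)

print("uneven:", uneven_report)
print("paced: ", paced_report)
```

Both sequences average the same frame time, but only the evenly spaced one has zero spread between frames, which is the property that tends to read as smoother motion.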

Improved OpenGL Support

If you develop for mobile, you also benefit from improved OpenGL support. We have added OpenGL multithreading on iOS to improve performance on low-end iOS devices that don’t support Metal (approximately 25% of iOS devices that run Unity games).

We have also added OpenGL support for the SRP Batcher on both iOS and Android, to improve CPU performance in projects that use the Lightweight Render Pipeline (LWRP).

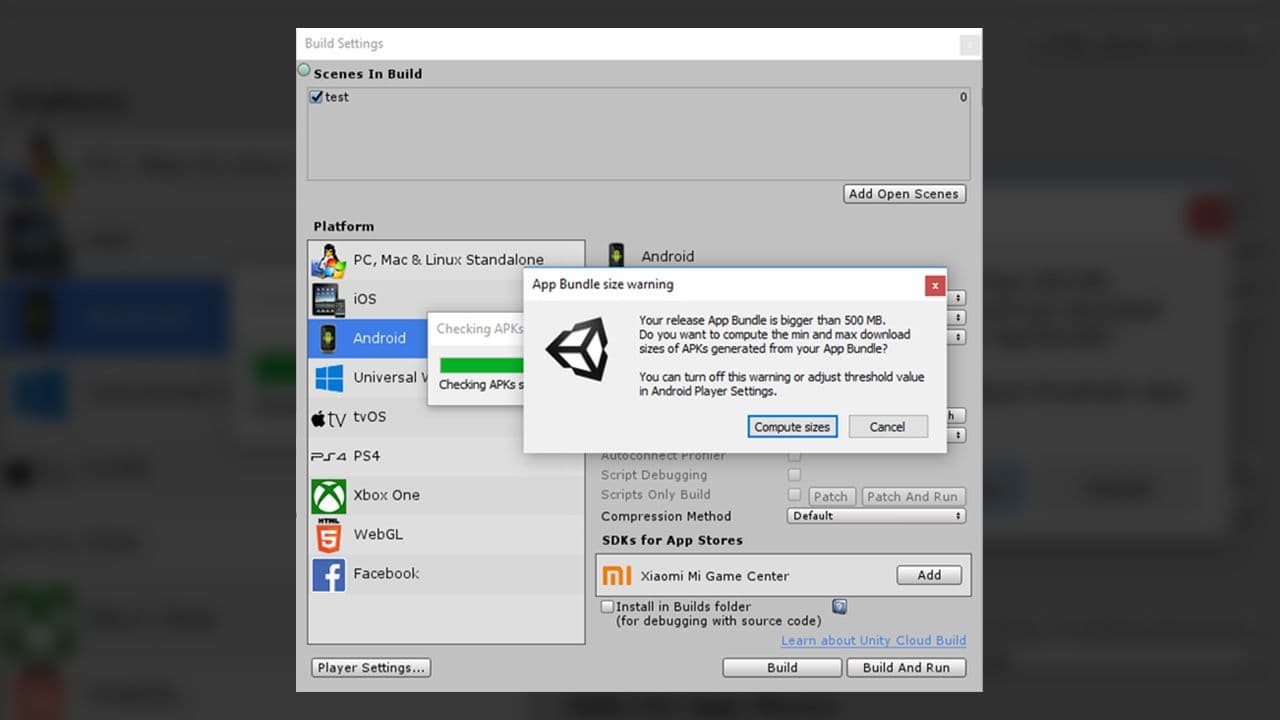

APK size check using Android App Bundle

This new feature makes it easier to check the final application size for different targets.

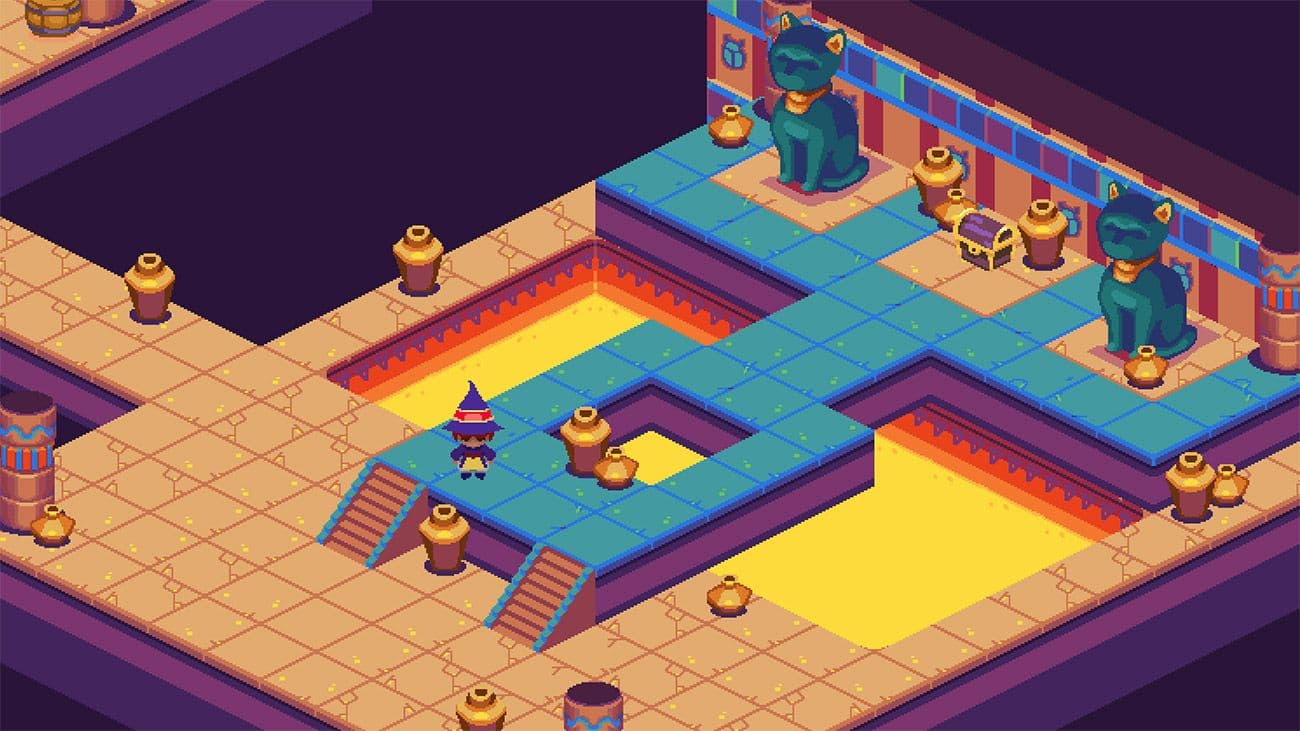

Editor features available via Package Manager

We continue to make the Editor leaner and more modular by making several existing features available as packages, including Unity UI, 2D Sprite Editor, and 2D Tilemap Editor. You can integrate, upgrade or remove them via the Package Manager.

SDK loading and management system for AR/VR

This revamped system for your target platforms helps streamline the development workflow. It’s in early Preview and we are looking for users to try out the new workflow and give us feedback.

AR Foundation updates

AR Foundation now includes support for face-tracking, 2D image-tracking, 3D object-tracking, environment probes and more. All of these features are in Preview.

- Face-Tracking (ARKit and ARCore): You can access face landmarks, a mesh representation of detected faces, and blend shape information, which can feed into a facial animation rig. The Face Manager takes care of configuring devices for face-tracking and creates GameObjects for each detected face.

- 2D Image-Tracking (ARKit and ARCore): This feature lets you detect 2D images in the environment. The Tracked Image Manager automatically creates GameObjects that represent all recognized images. You can change an AR experience based on the presence of specific images.

- 3D Object-Tracking (ARKit): You can import digital representations of real-world objects into your Unity experiences and detect them in the environment. The Tracked Object Manager creates GameObjects for each detected physical object, so that experiences can change based on the presence of specific real-world objects. This functionality can be great for building educational and training experiences, in addition to games.

- Environment Probes (ARKit): This feature detects lighting and color information in specific areas of the environment, which helps 3D content blend seamlessly with the surroundings. The Environment Probe Manager uses this information to automatically create cubemaps in Unity.

- Motion Capture (ARKit): This feature captures people’s movements. The Human Body Manager provides 2D (screen-space) and 3D (world-space) representations of humans recognized in the camera frame.

- People Occlusion (ARKit): This enables more realistic AR experiences, blending digital content into the real world. The Human Body Manager uses depth segmentation images to determine if someone is in front of the digital content.

- Collaborative Session (ARKit): This allows multiple connected ARKit apps to continuously share their understanding of the environment, enabling multiplayer games and collaborative applications.
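The People Occlusion item above rests on a per-pixel depth comparison: digital content is hidden wherever a detected person is closer to the camera than the content. The following is a conceptual sketch of that comparison only. The function name, the depth values, and the use of `None` for "no person at this pixel" are all assumptions made for illustration; it does not use or represent the AR Foundation or ARKit API.

```python
def occlusion_mask(person_depth_m, content_depth_m):
    """Return a 2D mask that is True where a person hides the content.

    person_depth_m: rows of per-pixel depths in metres, with None where
        no person was segmented (hypothetical input format).
    content_depth_m: distance of the digital content from the camera.
    """
    return [
        [depth is not None and depth < content_depth_m for depth in row]
        for row in person_depth_m
    ]


# Hypothetical 2x2 depth segmentation image (metres), illustrative only.
person_depth = [
    [None, 1.2],
    [2.5, 0.8],
]

# Digital content placed 2.0 m from the camera.
mask = occlusion_mask(person_depth, 2.0)
print(mask)  # [[False, True], [False, True]]
```

Pixels marked `True` are where a renderer would draw the camera image of the person instead of the digital content.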

VR support for HDRP

HDRP now includes support for your VR projects (in Preview). This support is currently limited to Windows 10 and Direct3D11 devices, and you must use Single Pass Stereo rendering for VR in HDRP.

Vuforia support

Vuforia support has been migrated from Player Settings to the Package Manager, giving you access to Vuforia Engine 8.3, the latest version.