Build great

Latest news

Game development, unified.

Build anything

Unity is a proven game engine with one of the largest communities and a vast ecosystem covering every use case.

Deploy anywhere

Built for all major platforms. Ship your game on desktop, iOS, Android, Nintendo Switch™, PlayStation®, Xbox®, Meta Quest, the Web, Apple Vision Pro, and more. Unity offers built-in insights that reveal what players truly enjoy, helping you optimize games that last.

Grow your player base

Growth is about more than downloads: it means pinpointing the players who will love your game in order to drive sustainable, predictable growth.

Grow your economy

Drive growth while protecting the player experience. Design a monetization strategy that fits naturally into your gameplay. Unity gives you the flexibility to optimize long-term value through a wide range of commercial tools.

Anyone can create with Unity

Cult of the Lamb

Hollow Knight: Silksong

Jump Space

From indie to franchise, get started and iterate quickly

Iterate quickly in C#, create 2D and 3D games in every genre and style imaginable, and enjoy drag-and-drop simplicity. Build for more than 25 platforms and get help at every step from one of the most thriving game development communities in the world.

Learn and discuss

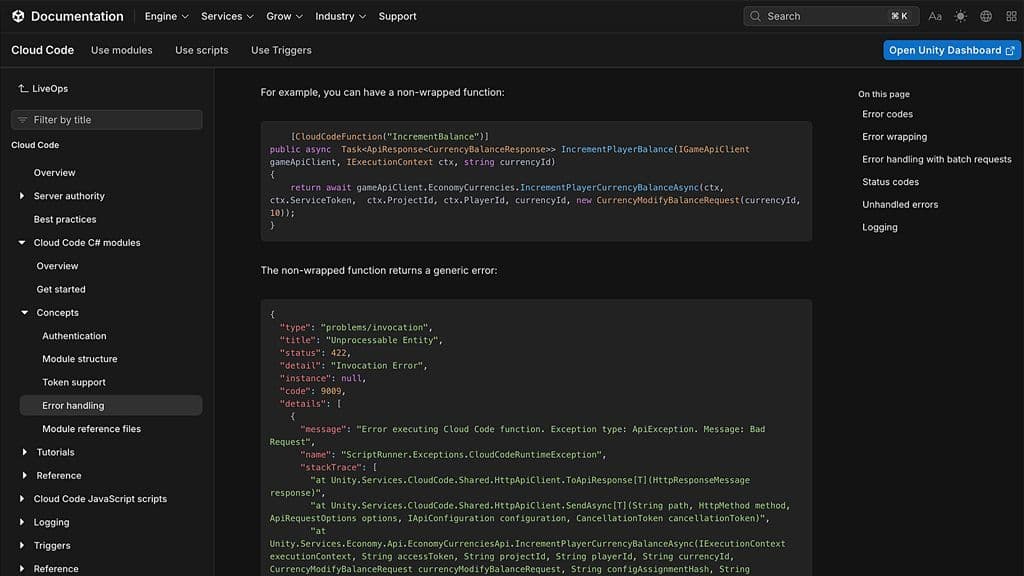

Documentation

Unlock the full power of Unity with a detailed manual and a scripting API reference. Find answers, deepen your knowledge, and improve your projects.

Learn with Unity

Get started today with Unity Learn, your free path to mastering real-time 3D. Follow courses and tutorials built around hands-on projects. Earn badges and turn your ideas into playable results, ready to add to your portfolio.

Discussions

Tutorials and courses

Unity is for everyone, with plans tailored to your ambitions.

Innovative applications, across every industry

Unity also powers many of the most innovative 3D applications in automotive, manufacturing, retail, and medical sciences.

Creator credits

- Hollow Knight: Silksong | Team Cherry; Tiny Bookshop | neoludic games, Skystone Games, 2P Games; LEGO® Voyagers | Light Brick Studio, Annapurna Interactive; PEAK | Aggro Crab, Landfall; R.E.P.O. | semiwork; Tainted Grail: the Fall of Avalon | Questline, Awaken Realms; CloverPit | Panik Arcade, Future Friends Games; Blue Prince | Dogubomb, Raw Fury; Megabonk | vedinad; Schedule 1 | TVGS; Deep Rock Galactic: Survivor | Funday Games, Ghost Ship Publishing; Jump Space | Keepsake Games

- Nintendo Switch is a registered trademark of Nintendo.