Create the future in 3D

The 3D platform that brings every team together

Create

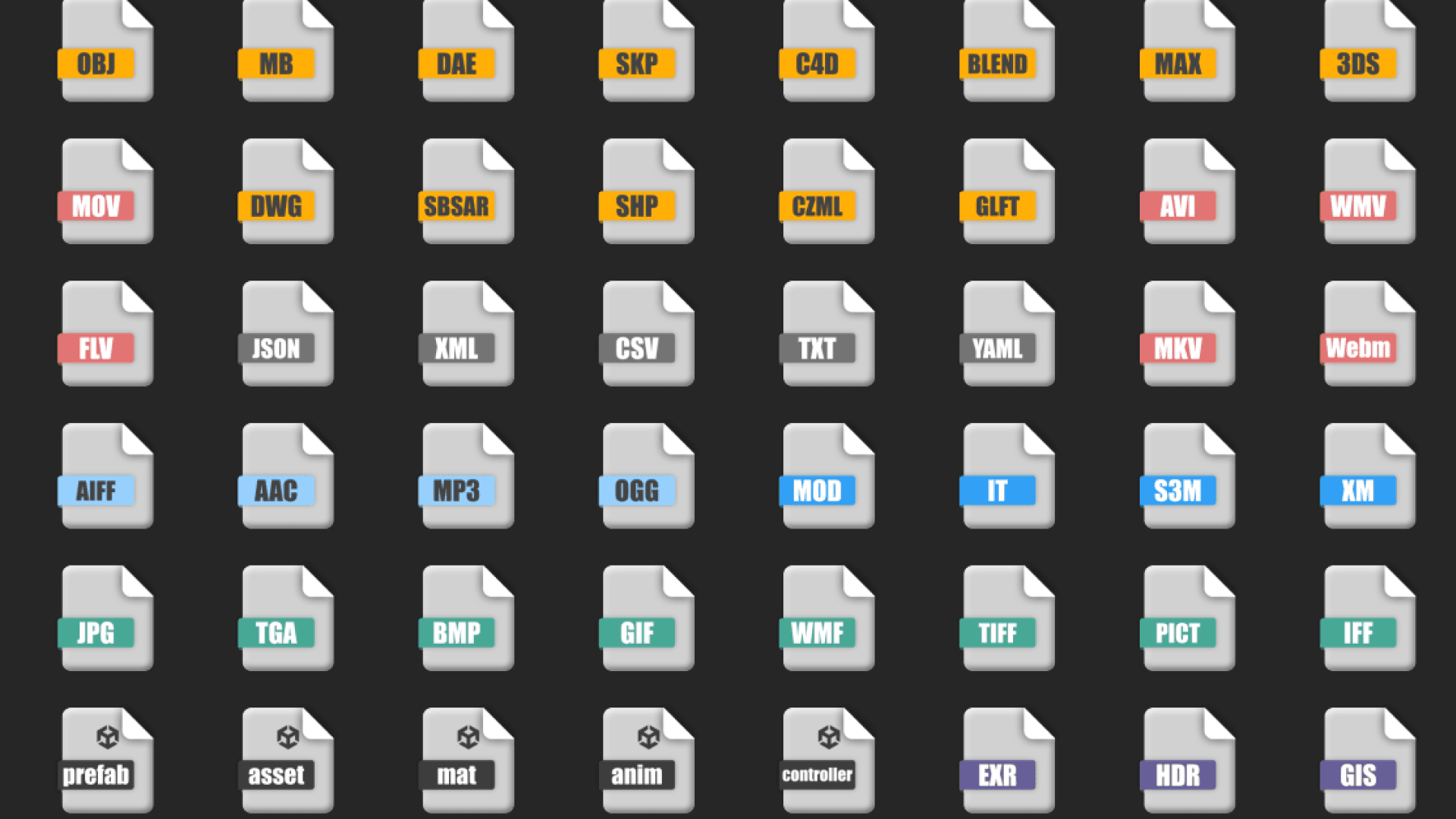

Connect

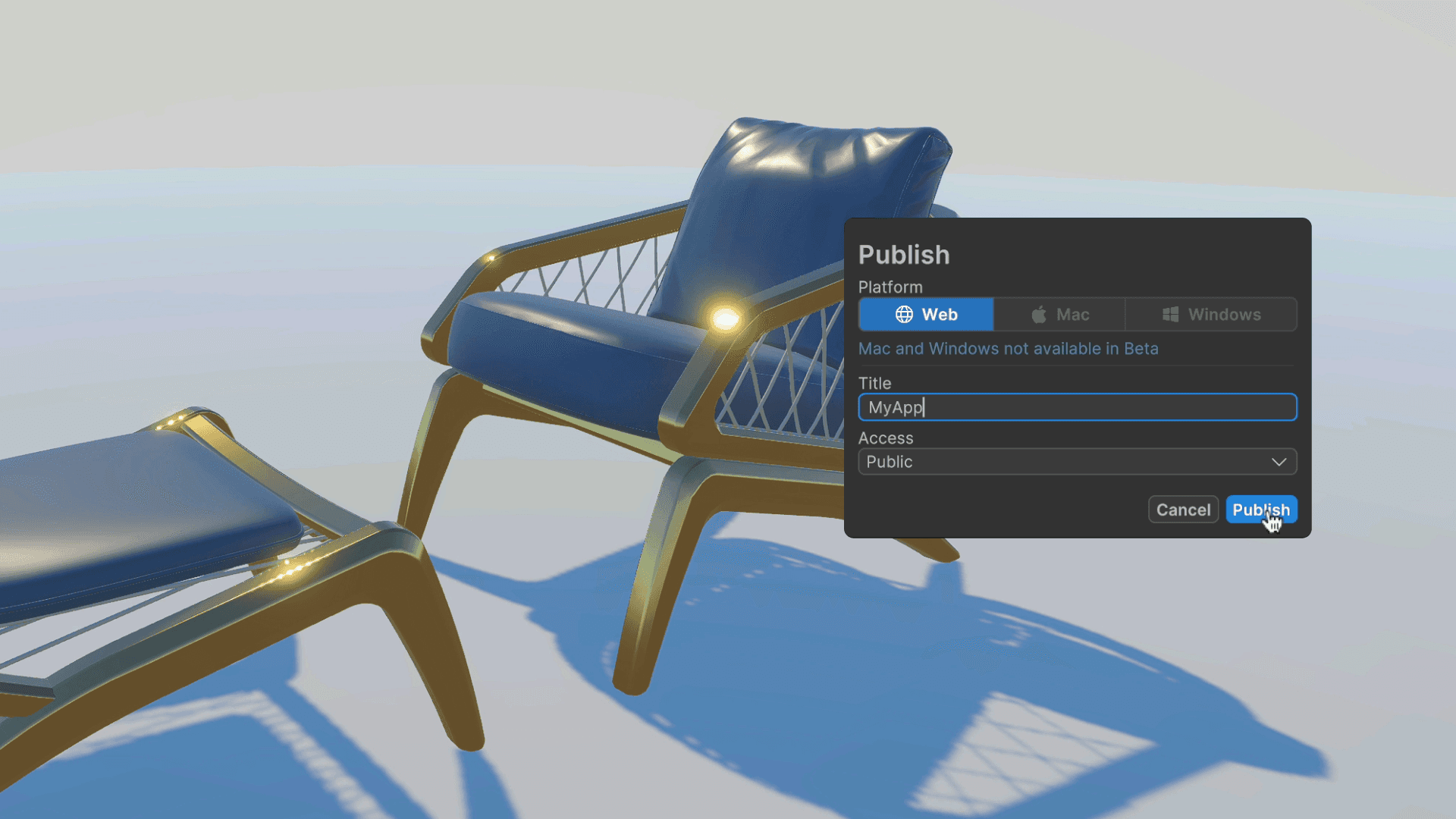

Deploy

Collaborate

Meet the teams reimagining how the world is experienced

One platform, endless applications

Prototyping

Bring ideas to life as interactive 3D models in hours instead of weeks, so you can test, iterate, and validate faster than ever.

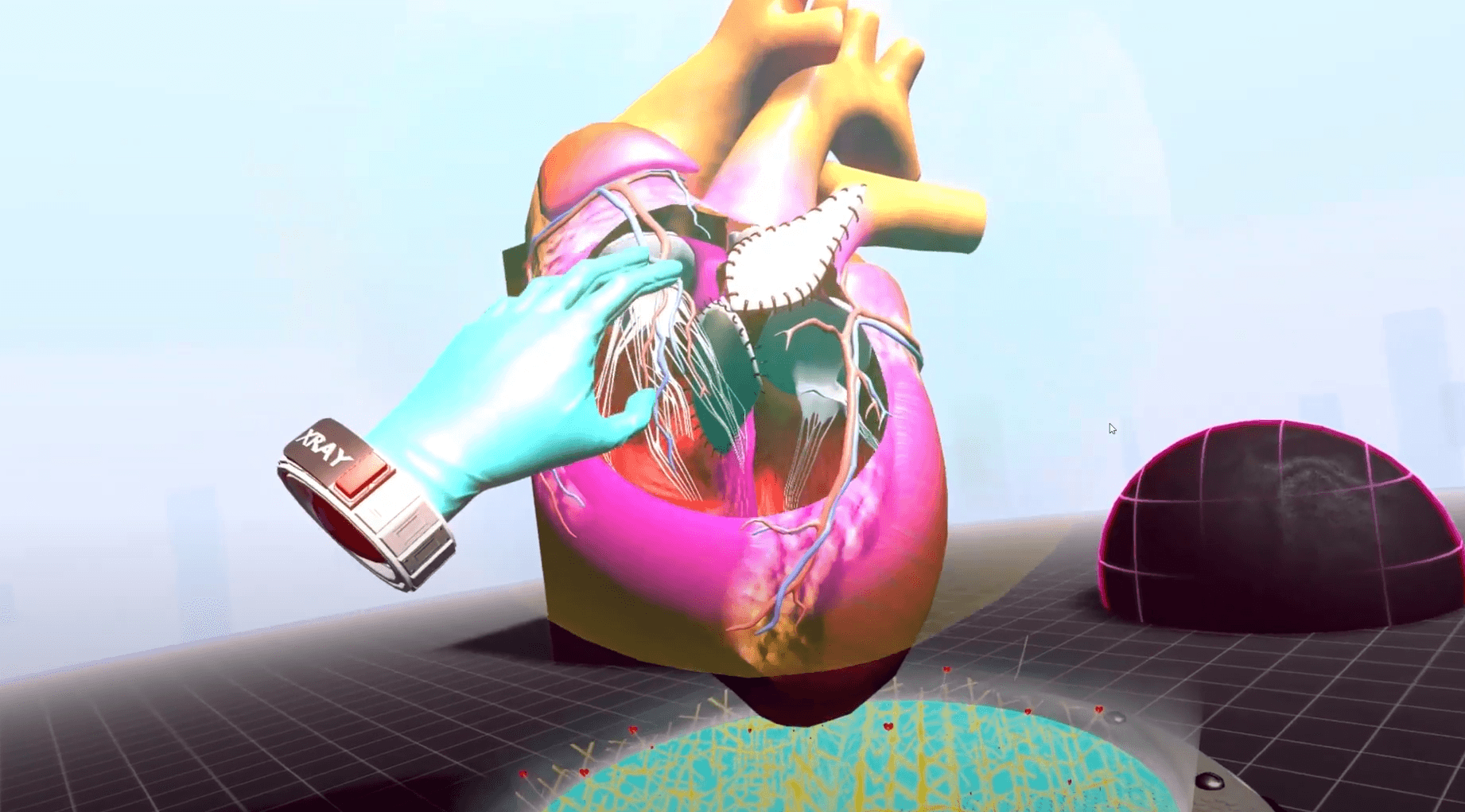

Training

Equip teams with immersive simulations that improve safety and readiness before production begins.

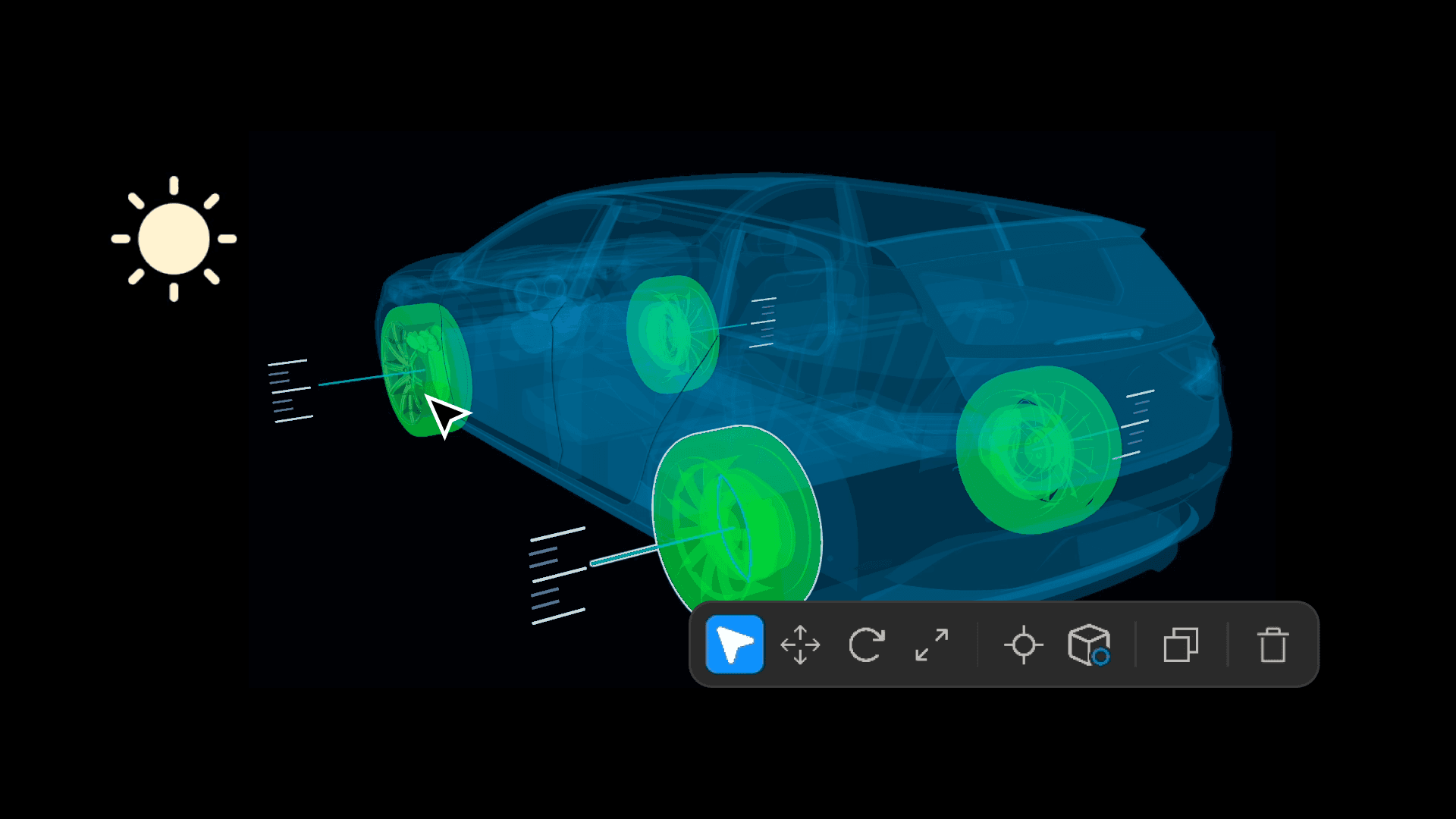

Digital Twins

Create real-time 3D replicas of physical systems to identify issues, optimize performance, and reduce waste.

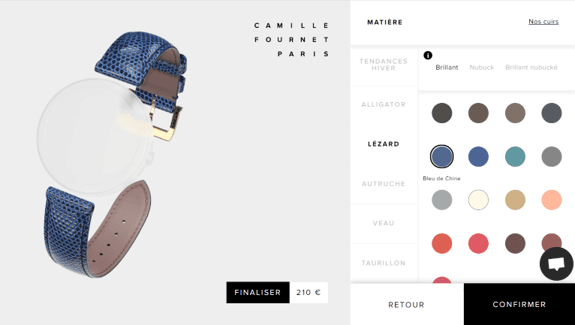

Product Configurators

Let customers explore and customize products, increasing engagement and confidence in every purchase.

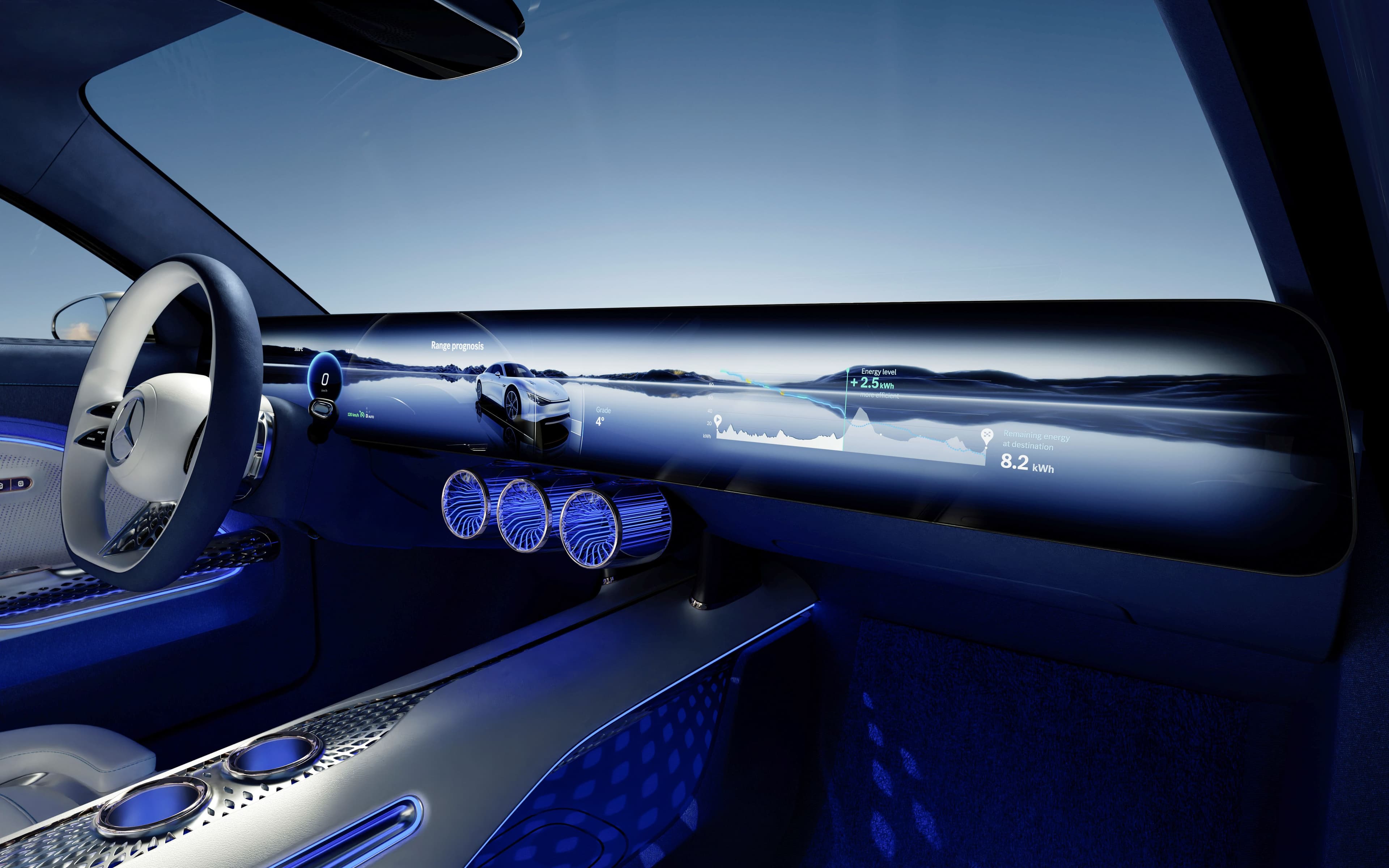

Human-Machine Interfaces (HMI)

Design, test, and refine operator experiences, ensuring usability and efficiency before they reach the production line.

Unity Industry Partner Program

Our partner program is tailored to your needs, whether you provide creative consulting services or develop software solutions built on Unity.

1. As of September 2023. Source: Unity internal resources.