Light baked Prefabs and other tips for getting to 60 fps on low-end phones

What you will get from this page: tips from Michelle Martin, software engineer at MetalPop Games, on how to optimize games for a range of mobile devices so you can reach as many potential players as possible.

With their mobile strategy game, Galactic Colonies, MetalPop Games faced the challenge of letting players build huge cities on low-end devices without the frame rate dropping or the device overheating. See how they found a balance between good-looking visuals and solid performance.

Building cities on low-end devices

As powerful as mobile devices are today, it is still difficult to run large, good-looking game environments at a solid frame rate. Achieving a stable 60 fps in a large-scale 3D environment on an older mobile device can be a challenge.

As developers, we could simply target high-end phones and assume that most players will have hardware capable of running our game smoothly. But that would lock out a huge number of potential players, because many older devices are still in use. Those are all potential customers you don't want to exclude.

In our game, Galactic Colonies, players colonize alien planets and build huge colonies made up of a large number of individual buildings. While smaller colonies might have only a dozen buildings, larger ones can easily have hundreds.

This is what our list of goals looked like when we started working on the buildings:

- We want huge maps with hundreds of buildings

- We want to run fast on cheaper and/or older mobile devices

- We want nice-looking lights and shadows

- We want a simple and predictable production process

Lighting challenges on mobile

Good lighting in your game is key to making 3D models look great. In Unity that is easy: set up your level, place dynamic lights, and you are good to go. And if you need to keep an eye on performance, just bake all your lights and add SSAO and other eye candy via the post-processing stack. There you go, ship it!

For mobile games, you need a good bag of tricks and workarounds to set up lighting. For example, unless you are targeting high-end devices, you should avoid post-processing effects. Likewise, a large scene full of dynamic lights will drastically reduce your frame rate.

Real-time lighting can be expensive even on a desktop PC. On mobile devices, resource limits are tighter still, and you can't always afford all the nice features you would like to have.

Light baking to the rescue

So you don't want to drain your users' phone batteries more than necessary by having too many fancy lights in your scene.

If you constantly push the hardware to its limits, the phone will run hot and consequently throttle down to protect itself. To avoid this, you can bake every light that doesn't need to cast real-time shadows.

Light baking is the process of precalculating highlights and shadows for a (static) scene and storing that information in a lightmap. The renderer then knows where to make a model lighter or darker, creating the illusion of light.

Rendering this way is fast because all the expensive and slow light calculations are done offline; at runtime, the renderer (shader) only needs to look up the result in a texture.
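
To make the "look up the result in a texture" step concrete, here is a minimal, engine-agnostic Python sketch. It is not Unity code: the tiny 2x2 `LIGHTMAP`, the nearest-neighbour sampling, and the function names are all assumptions chosen for illustration.

```python
def sample_nearest(texture, u, v):
    """Nearest-neighbour lookup in a row-major texture; u and v are in [0, 1]."""
    height = len(texture)
    width = len(texture[0])
    x = min(int(u * width), width - 1)
    y = min(int(v * height), height - 1)
    return texture[y][x]


def shade_baked(albedo, lightmap, u, v):
    """Runtime cost of baked lighting: one lookup and one multiply per channel."""
    light = sample_nearest(lightmap, u, v)
    return tuple(a * l for a, l in zip(albedo, light))


# Hypothetical 2x2 lightmap: the first row is fully lit, the second is in shadow.
LIGHTMAP = [
    [(1.0, 1.0, 1.0), (1.0, 1.0, 1.0)],
    [(0.25, 0.25, 0.25), (0.25, 0.25, 0.25)],
]

lit = shade_baked((0.8, 0.4, 0.2), LIGHTMAP, 0.1, 0.1)
shadowed = shade_baked((0.8, 0.4, 0.2), LIGHTMAP, 0.1, 0.9)
```

All the work of deciding which texels are bright or dark happened before the game shipped; the per-pixel work at runtime is only the lookup and the multiply.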

The trade-off is that you have to ship extra lightmap textures, which increases your build size and requires extra texture memory at runtime. You also lose some space because your meshes need lightmap UVs and become a bit bigger. But overall, you gain a tremendous speed boost.

But for our game this wasn't an option, because the game world is built by the player in real time. New regions are constantly being discovered, and new buildings are added or existing ones upgraded, which prevents any kind of efficient light baking. Simply hitting the Bake button won't work when you have a dynamic world that the player can change at any time.

We therefore faced a number of problems that arise when baking lights for highly modular scenes.

A pipeline for light baked Prefabs

Light baking data in Unity is stored with, and directly tied to, the scene data. This is not a problem if you have individual levels and pre-built scenes with only a handful of dynamic objects. You can pre-bake the lighting and be done.

Obviously, this doesn't work when you create levels dynamically. In a city-building game, the world isn't pre-created. Instead, it is largely assembled dynamically and on the fly, with the player deciding what to build and where. This is usually done by instantiating Prefabs wherever the player decides to build something.

The only solution to this problem is to store all the relevant light baking data inside the Prefab instead of the scene. Unfortunately, there is no easy way to copy the data about which lightmap to use, along with its coordinates and scale, into a Prefab.

The best approach to a solid pipeline for light baked Prefabs is to create the Prefabs in a different, separate scene (in fact, several scenes) and then load them into the main game when needed. Each modular piece is light baked once and then loaded into the game when required.

Take a close look at how light baking works in Unity and you will see that rendering a light baked mesh really just means applying another texture to it and brightening, darkening (or sometimes colorizing) the mesh a bit. All you need is the lightmap texture and the UV coordinates, both of which Unity creates during the light baking process.

During light baking, Unity creates a new set of UV coordinates (which point into the lightmap texture) along with an offset and scale for each individual mesh. These coordinates change every time the lights are re-baked.

How to use UV channels

To develop a solution to this problem, it helps to understand how UV channels work and how best to make use of them.

Each mesh can have multiple sets of UV coordinates (called UV channels in Unity). In most cases one set of UVs is enough, because the different textures (Diffuse, Spec, Bump, etc.) all store their information at the same place in the image.

But when objects share a texture, such as a lightmap, and need to look up the information for a specific place in one large texture, there is often no way around adding another set of UVs to use with that shared texture.

The drawback of multiple UV sets is that they consume additional memory. If you use two sets of UVs instead of one, you double the number of UV coordinates for every vertex of the mesh. Each vertex now stores two additional floating-point numbers (four in total for its UVs), which are uploaded to the GPU for rendering.
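
As a back-of-the-envelope check of that cost, the short Python sketch below estimates the UV storage for a mesh. It assumes uncompressed 32-bit floats and two components per UV coordinate; the 10,000-vertex mesh is a made-up example, not a figure from the game.

```python
BYTES_PER_FLOAT = 4   # assumption: uncompressed 32-bit floats
FLOATS_PER_UV = 2     # one (u, v) pair per vertex per UV set


def uv_bytes(vertex_count, uv_sets):
    """Bytes needed to store the UV coordinates of a mesh."""
    return vertex_count * uv_sets * FLOATS_PER_UV * BYTES_PER_FLOAT


# Hypothetical mesh with 10,000 vertices.
one_set = uv_bytes(10_000, 1)
two_sets = uv_bytes(10_000, 2)
extra = two_sets - one_set
```

Under these assumptions the second UV set adds 8 bytes per vertex, which is small per mesh but adds up across hundreds of buildings.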

Creating the Prefabs

Unity generates the coordinates and the lightmap using its regular light baking functionality. The engine writes the UV coordinates for the lightmap into the second UV channel of the model. It is important to note that the primary set of UV coordinates can't be used for this, because the model needs to be unwrapped.

Imagine a box that uses the same texture on each of its sides: the individual sides all have the same UV coordinates, because they reuse the same texture. But this won't work for a lightmapped object, since each side of the box is hit by light and shadow individually. Each side needs its own space in the lightmap with its own lighting data. Hence the need for a new set of UVs.

To set up a new light baked Prefab, all we need to do is store both the texture and its coordinates so they don't get lost, and copy them into the Prefab.

Once the light baking is done, we run a script that goes through all the meshes in the scene and writes the UV coordinates into the actual UV2 channel of the mesh, with the offset and scale values applied.

The code to modify the mesh is relatively simple.

To be more precise: this is done on a copy of the mesh, not the original, because we will apply further optimizations to the mesh during the bake process.
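
The idea of baking the scale and offset into a copy of the mesh can be sketched in a few lines of Python. This is an illustration only, not the studio's Unity script: the `Mesh` class and `bake_lightmap_uvs` are hypothetical names, and the `(scale_x, scale_y, offset_x, offset_y)` ordering is assumed to mirror the layout of Unity's `Renderer.lightmapScaleOffset`.

```python
from dataclasses import dataclass, replace


@dataclass(frozen=True)
class Mesh:
    """Hypothetical stand-in for a mesh; only the UV channels are modelled."""
    uv: tuple    # primary UVs, used by the regular textures
    uv2: tuple   # lightmap UVs in the mesh's own 0..1 unwrap space


def bake_lightmap_uvs(mesh, scale_offset):
    """Return a copy whose uv2 points directly into the shared lightmap.

    scale_offset is (scale_x, scale_y, offset_x, offset_y).
    The original mesh is left untouched.
    """
    sx, sy, ox, oy = scale_offset
    baked = tuple((u * sx + ox, v * sy + oy) for u, v in mesh.uv2)
    return replace(mesh, uv2=baked)


original = Mesh(uv=((0.0, 0.0), (1.0, 1.0)), uv2=((0.0, 0.0), (1.0, 1.0)))
# Hypothetical bake result: this mesh occupies the top-right region of the atlas.
baked_copy = bake_lightmap_uvs(original, (0.5, 0.25, 0.5, 0.75))
```

Because the transform is stored in the vertex data of the copy, the Prefab no longer depends on per-scene lightmap settings, which is what allows it to be instantiated anywhere.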

The copies are generated automatically, stored in a Prefab, and assigned a new material with a custom shader and the newly created lightmap. This leaves our original meshes untouched, and the light baked Prefabs are immediately ready to use.

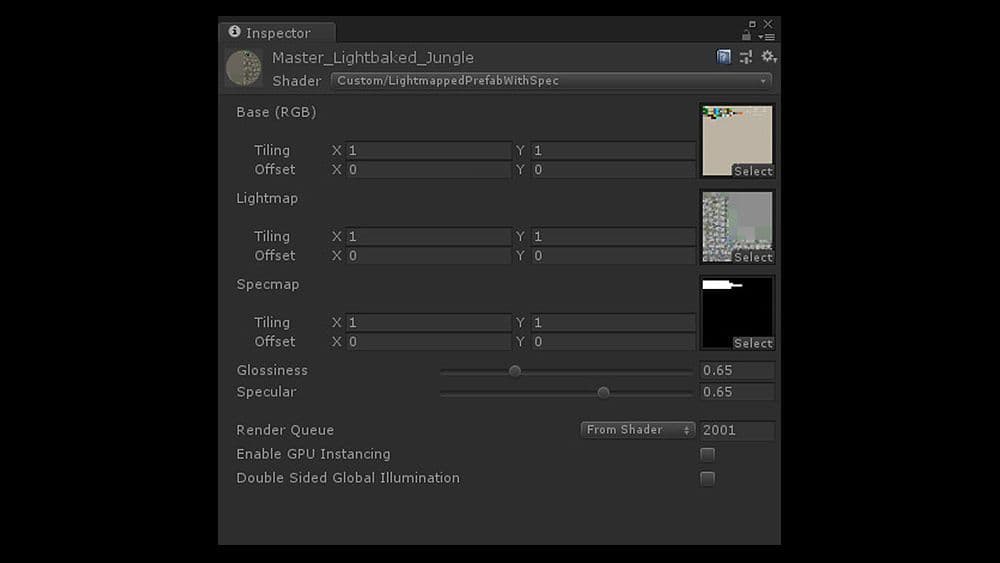

Custom lightmap shader

This makes the workflow very simple. To update the style and look of the graphics, just open the appropriate scene, make all the modifications until you are happy, and then start the automated bake-and-copy process. When it has finished, the game will start using the updated Prefabs and meshes with the updated lighting.

The actual lightmap texture is added by a custom shader, which applies the lightmap to the model as a second light texture during rendering. The shader is very simple and short; apart from applying the color and the lightmap, it calculates a cheap, fake specular/gloss effect.

The image above shows a material set up to use this shader.

Setup and static batching

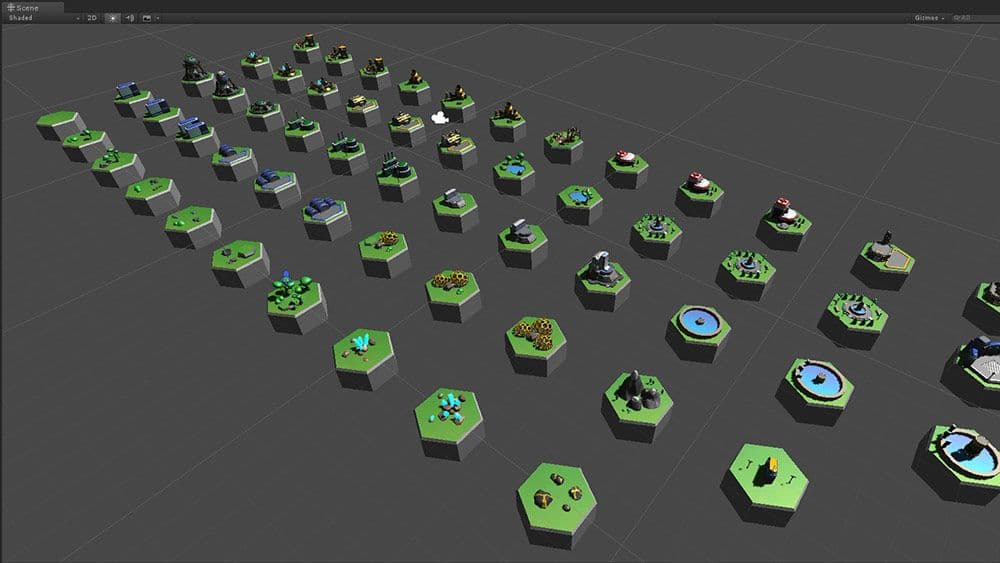

In our case, we have four different scenes with all the Prefabs set up. Our game features different biomes, such as tropical, ice, and desert, and we split up our scenes accordingly.

All Prefabs used in a given scene share a single lightmap. This means a single extra texture, in addition to the Prefabs sharing only one material. As a result, we were able to render all models as static and batch-render almost our entire world in just one draw call.
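
Why one material plus one lightmap collapses into so few draw calls can be illustrated with a simplified model. The Python sketch below only captures the "same material, same lightmap" condition; Unity's actual static batching has further constraints that are not modelled here, and all object and material names are invented.

```python
from collections import defaultdict


def group_for_batching(renderers):
    """Group objects that share both material and lightmap.

    Each renderer is a (name, material, lightmap) tuple. In this simplified
    model every group could be drawn together in a single call.
    """
    batches = defaultdict(list)
    for name, material, lightmap in renderers:
        batches[(material, lightmap)].append(name)
    return dict(batches)


# Hypothetical colony: three baked buildings and one animated, unbaked part.
renderers = [
    ("house", "colony_mat", "tropical_lightmap"),
    ("factory", "colony_mat", "tropical_lightmap"),
    ("warehouse", "colony_mat", "tropical_lightmap"),
    ("crane_arm", "fake_lit_mat", None),
]
groups = group_for_batching(renderers)
```

Every additional material or lightmap creates another group, which is why keeping each biome on a single shared lightmap matters.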

The light baking scenes, in which all our tiles/buildings are set up, have additional light sources to create localized highlights. You can place as many lights in the setup scenes as you need, since they will all be baked down anyway.

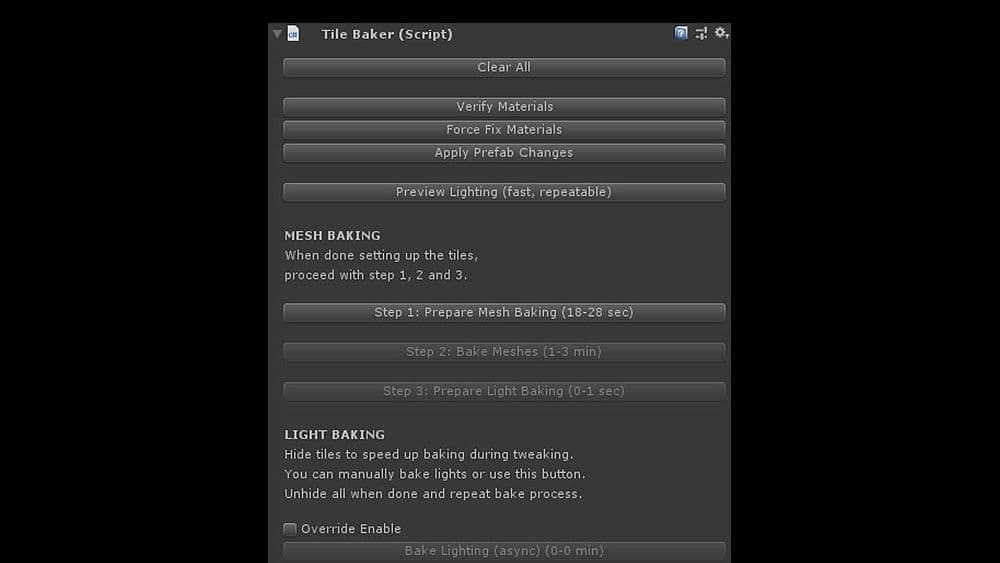

Custom UI dialog

The bake process is handled in a custom UI dialog that takes care of all the necessary steps. It ensures that:

- Each model has the correct material assigned

- Everything that doesn't need to be baked is hidden during the process

- The meshes are combined/baked

- The UVs are copied and the Prefabs are created

- Everything is named correctly and the necessary files are checked out from the version control system

Properly named Prefabs are created from the meshes so that the game code can load and use them directly. The meta files are also changed during this process, so that references to the meshes of the Prefabs don't get lost.

This workflow allows us to tweak our buildings as much as we want, light them the way we like, and then let the script take care of everything.

When we switch back to our main scene and run the game, it just works; no manual intervention or other updates are required.

Fake light and dynamic bits

One obvious drawback of a scene where 100% of the lighting is pre-baked is that it is difficult to have any dynamic objects or motion. Anything that casts a shadow would require real-time light and shadow calculation, which we would, of course, like to avoid altogether.

But without any moving objects, the 3D environment would simply appear static and dead.

We were, of course, willing to live with some restrictions, since our top priority was achieving good visuals and fast rendering. To create the impression of a living, moving space colony or city, not many objects actually need to move. And most of these didn't necessarily require shadows, or at least their absence wouldn't be noticed.

We started by splitting all city building blocks into two separate Prefabs: a static portion, which contains the majority of the vertices and all the complex bits of our meshes, and a dynamic one, which contains as few vertices as possible.

The dynamic parts of a Prefab are animated bits placed on top of the static ones. They are not light baked, and we used a very fast and cheap fake lighting shader to create the illusion that the object is dynamically lit.

These objects also either have no shadow, or we created a fake shadow as part of the dynamic bit. Most of our surfaces are flat, so in our case that wasn't a big obstacle.

There are no shadows on the dynamic bits, but this is barely noticeable unless you know to look for it. The lighting of the dynamic Prefabs is also completely fake; there is no real-time lighting at all.

The first cheap shortcut we took was to hardcode the position of our light source (the sun) into the fake lighting shader. That is one less variable the shader needs to look up and fill dynamically from the world.

A constant is generally cheaper to work with than a dynamic value. This gave us basic lighting, with light and dark sides of the meshes.
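
The effect of a hardcoded sun can be sketched with a simple diffuse term. The Python below is an illustration of the idea rather than the game's shader: the `SUN_DIR` vector, the `AMBIENT` floor, and the use of a plain N·L (Lambert-style) term are all assumptions.

```python
import math


def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


# Hypothetical constants baked into the "shader": direction towards the sun
# and a minimum brightness so that unlit sides are not pure black.
SUN_DIR = normalize((0.3, 0.8, 0.5))
AMBIENT = 0.3


def fake_diffuse(normal):
    """Brightness of a surface from its normal alone; no scene lookup needed."""
    n = normalize(normal)
    n_dot_l = max(0.0, sum(a * b for a, b in zip(n, SUN_DIR)))
    return AMBIENT + (1.0 - AMBIENT) * n_dot_l


facing_sun = fake_diffuse(SUN_DIR)
facing_away = fake_diffuse(tuple(-c for c in SUN_DIR))
```

Surfaces facing the constant sun direction come out bright and those facing away fall back to the ambient floor, which yields the light and dark sides described above without reading any light data from the scene.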

Specular/gloss

To make things a bit shinier, we added a fake specular/gloss calculation to our shaders for both dynamic and static objects. Specular reflections help to create a metallic look and also convey the curvature of a surface.

Since specular highlights are a form of reflection, the angle of the camera and the light source relative to each other is required to calculate them correctly. When the camera moves or rotates, the specular highlight changes. In general, any shader calculation would need access to the camera position and to every light source in the scene.

However, in our game there is only one light source that we use for specular: the sun. In our case the sun never moves and can be treated as a directional light. We can simplify the shader considerably by using only a single light and assuming a fixed position and incoming angle for it.

Even better, the camera in Galactic Colonies shows the scene from a top-down view, as in most city-building games. The camera can be tilted a little and zoomed in and out, but it can't rotate around the up axis.

Overall, it always looks at the environment from above. To fake a cheap specular look, we pretended that the camera was completely fixed and that the angle between the camera and the light was always the same.

This way we could again hardcode a constant value into the shader and achieve a cheap specular/gloss effect.

Using a fixed angle for the specular is, of course, technically incorrect, but it is practically impossible to tell the difference as long as the camera angle doesn't change much.
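
One way to picture the fixed-angle trick is a Blinn-Phong-style highlight whose half vector is a constant. The article does not state which specular model the game uses, so the model, the vectors, and the `SHININESS` exponent in this Python sketch are all assumptions.

```python
import math


def normalize(v):
    """Scale a 3D vector to unit length."""
    length = math.sqrt(sum(c * c for c in v))
    return tuple(c / length for c in v)


# Hypothetical constants: direction towards the sun and towards the camera.
SUN_DIR = normalize((0.3, 0.8, 0.5))
VIEW_DIR = normalize((0.0, 1.0, 0.4))

# With light and camera both treated as fixed, the half vector is computed
# once and becomes a constant instead of a per-frame, per-pixel calculation.
HALF_VECTOR = normalize(tuple(l + v for l, v in zip(SUN_DIR, VIEW_DIR)))
SHININESS = 32.0


def fake_specular(normal):
    """Highlight strength that depends only on the surface normal."""
    n = normalize(normal)
    n_dot_h = max(0.0, sum(a * b for a, b in zip(n, HALF_VECTOR)))
    return n_dot_h ** SHININESS


peak = fake_specular(HALF_VECTOR)
roof = fake_specular((0.0, 1.0, 0.0))
underside = fake_specular((0.0, -1.0, 0.0))
```

The highlight no longer follows the real camera, but because the camera in the game only tilts slightly, the constant stays close to what a full calculation would give.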

To the player the scene will still look correct, which is the whole point of real-time lighting.

Lighting an environment in a real-time video game has always been, and still is, about appearing visually correct rather than being a physically correct simulation.

Since almost all of our meshes share one material, with most of the detail coming from the lightmap and the vertices, we added a specular texture map to tell the shader when and where to apply the specular value and how strongly. The texture is accessed through the primary UV channel, so it doesn't require an additional set of coordinates. And because there is not much detail in it, it is very low resolution and takes up hardly any space.
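
The role of the specular map can be shown as a simple mask. In this Python sketch the additive blend, the clamp to 1.0, and the function name `apply_spec_mask` are illustrative assumptions, not details taken from the game's shader.

```python
def apply_spec_mask(lit_color, spec_strength, mask_value):
    """Add a highlight scaled by the value read from the specular map.

    mask_value is the texel sampled via the primary UVs, in [0, 1]:
    0 means a matte area, 1 means a fully shiny one.
    """
    highlight = spec_strength * mask_value
    return tuple(min(1.0, c + highlight) for c in lit_color)


# Hypothetical values: the same highlight on a matte and on a shiny texel.
matte = apply_spec_mask((0.5, 0.5, 0.5), 0.4, 0.0)
shiny = apply_spec_mask((0.5, 0.5, 0.5), 0.4, 1.0)
clamped = apply_spec_mask((0.9, 0.9, 0.9), 0.4, 1.0)
```

A single shared material can therefore show both dull and metallic areas, with the low-resolution mask deciding which is which.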

For some smaller dynamic bits with a low vertex count, we could even make use of Unity's automatic dynamic batching, which speeds up rendering further.

Stacking light baked Prefabs

All these baked shadows can sometimes create new problems, especially when working with relatively modular buildings. In one case we had a warehouse the player could build, which would display the type of goods stored in it on the building itself.

This causes problems, since we have a light baked object on top of a light baked object. Light baking on top of light baking!

We approached the problem with yet another cheap trick:

- The surface where the additional object was to be added had to be flat and use a specific gray color that matched the base building

- In exchange for that trade-off, we could bake the objects on a smaller flat surface and place them on top of the target area with just a slight offset

- Lights, highlights, colored glow, and shadows were all baked into the tile

Some restrictions apply

Building and baking our Prefabs this way allows us to have huge maps with hundreds of buildings while keeping the draw call count very low. Our whole game world is more or less rendered with only one material, and we are at a point where the UI uses more draw calls than our game world. The fewer different materials Unity has to render, the better for your game's performance.

This leaves us ample room to add more things to our world, such as particles, weather effects, and other eye candy.

This way, even players with older devices are able to build large cities with hundreds of buildings while keeping a stable 60 fps.