The Unity PlayerLoop contains functions for interacting with the core of the game engine. This structure includes a number of systems that handle initialization and per-frame updates. All of your scripts rely on this PlayerLoop to create gameplay. When profiling, you'll see your project's user code under the PlayerLoop, with Editor components under the EditorLoop.

It's important to understand the execution order of Unity's FrameLoop. Every Unity script runs several event functions in a predetermined order. Learn the difference between Awake, Start, Update, and the other functions that make up the script lifecycle, so you can use them to improve performance.

Some examples include using FixedUpdate instead of Update when working with a Rigidbody, or using Awake instead of Start to initialize variables or game state before the game starts. Use these choices to minimize the code that runs every frame. Awake is called only once during the lifetime of a script instance, and always before any Start function. This means you should use Start for logic that interacts with or queries other objects, because by then those objects have run their own Awake initialization.

See the script lifecycle flowchart for the specific execution order of event functions.

If your project has demanding performance requirements (for example, an open-world game), consider creating a custom update manager that drives many objects from a single Update, LateUpdate, or FixedUpdate.

A common usage pattern for Update or LateUpdate is to run logic only when some condition is met. This can lead to many per-frame callbacks that effectively run no code other than checking that condition.

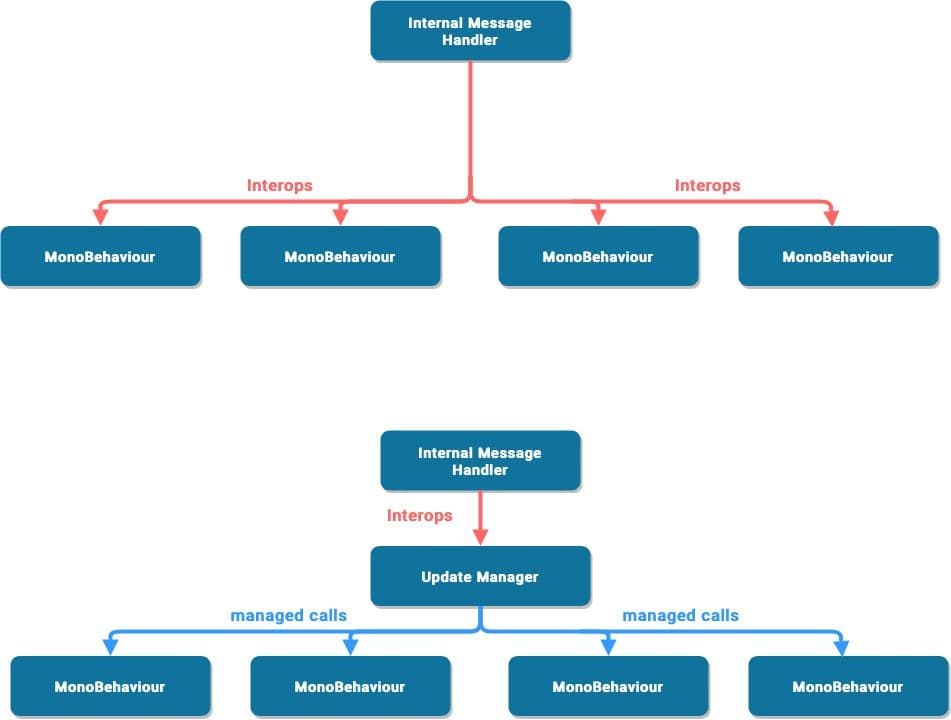

Whenever Unity calls a message method such as Update or LateUpdate, it makes an interop call, that is, a call from the native C/C++ side to the managed C# side. For a small number of objects, this is not an issue. When you have thousands of objects, this overhead starts to become significant.

Subscribe active objects to the update manager when they need callbacks, and unsubscribe them when they don't. This pattern can eliminate many of the interop calls to your MonoBehaviour objects.
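A minimal, engine-agnostic sketch of such an update manager follows. IUpdatable, UpdateManager, and TickCounter are hypothetical names, not Unity APIs. In a Unity project, you would typically let one MonoBehaviour own the manager and call Tick(Time.deltaTime) from its own Update, so Unity makes one interop call per frame instead of one per subscribed object.

```csharp
using System.Collections.Generic;

// Hypothetical contract for objects that want per-frame callbacks from the manager.
public interface IUpdatable
{
    void Tick(float deltaTime);
}

// Minimal update manager sketch. In Unity, a single MonoBehaviour would call
// Tick(Time.deltaTime) from its own Update(), replacing many per-object Update calls.
public sealed class UpdateManager
{
    private readonly List<IUpdatable> _subscribers = new List<IUpdatable>();

    // Reused every frame so that iterating over a snapshot does not allocate garbage.
    private readonly List<IUpdatable> _snapshot = new List<IUpdatable>();

    public int Count => _subscribers.Count;

    // Subscribe when the object needs callbacks; duplicate registrations are ignored.
    public void Register(IUpdatable item)
    {
        if (!_subscribers.Contains(item))
        {
            _subscribers.Add(item);
        }
    }

    // Unsubscribe as soon as the object no longer needs callbacks.
    public void Unregister(IUpdatable item)
    {
        _subscribers.Remove(item);
    }

    public void Tick(float deltaTime)
    {
        // Iterate over a copy so callbacks may register or unregister objects safely;
        // such changes take effect on the next Tick.
        _snapshot.Clear();
        _snapshot.AddRange(_subscribers);
        for (int i = 0; i < _snapshot.Count; i++)
        {
            _snapshot[i].Tick(deltaTime);
        }
    }
}

// Hypothetical subscriber used to show the flow: it only counts its callbacks.
public sealed class TickCounter : IUpdatable
{
    public int Ticks { get; private set; }
    public float Elapsed { get; private set; }

    public void Tick(float deltaTime)
    {
        Ticks++;
        Elapsed += deltaTime;
    }
}
```

Objects would call Register when they become active (for example, in OnEnable) and Unregister when they no longer need updates (for example, in OnDisable). Iterating over a reused snapshot list is one design choice among several; it lets callbacks change subscriptions mid-frame without allocating a new list every frame.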

See the engine-specific optimization techniques for implementation examples.

Consider whether code really needs to run every frame. You can move unnecessary logic out of Update, LateUpdate, and FixedUpdate. These Unity event functions are convenient places for code that must update every frame, but you can extract any logic that doesn't need to update at that frequency.

Only execute logic when something changes. Use techniques such as the observer pattern, implemented with events, so that a handler with a specific function signature is called at the moment of the change instead of polling for it every frame.
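The sketch below shows the observer pattern with a plain C# event. Health and its Changed event are hypothetical names used for illustration: a health bar or AI script subscribes once and runs only when the value actually changes, instead of comparing it in Update every frame.

```csharp
using System;

// Hypothetical state holder that notifies listeners only when its value changes.
public sealed class Health
{
    private int _current;

    public Health(int initial)
    {
        _current = initial;
    }

    // Observers subscribe with a method matching this signature: void Handler(int newValue).
    public event Action<int> Changed;

    public int Current
    {
        get => _current;
        set
        {
            if (value == _current)
            {
                return; // No change, so no listener code runs.
            }

            _current = value;
            Changed?.Invoke(value);
        }
    }
}
```

In a MonoBehaviour, subscribing in OnEnable and unsubscribing in OnDisable keeps disabled or destroyed objects from receiving callbacks.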

If you must use Update, you can run your code every n frames. This is one way of applying time slicing, a common technique for distributing a heavy workload across multiple frames.

For example, with interval set to 3, you can run ExampleExpensiveFunction once every three frames by calling it from Update only when Time.frameCount % interval == 0.

The trick is to interleave this with other work that runs on the remaining frames. In this example, you could "schedule" other expensive functions for when Time.frameCount % interval == 1 or Time.frameCount % interval == 2.
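The following sketch shows this interleaving without depending on UnityEngine, so it assumes the caller passes in the frame number; in Unity that would be Time.frameCount, passed from Update. TimeSlicedWork and its slot delegates are hypothetical names.

```csharp
using System;

// Hypothetical time-slicing helper: spreads three expensive jobs over three frames.
// In Unity you would call OnFrame(Time.frameCount) from Update().
public sealed class TimeSlicedWork
{
    private const int Interval = 3;

    private readonly Action _slot0;
    private readonly Action _slot1;
    private readonly Action _slot2;

    public TimeSlicedWork(Action slot0, Action slot1, Action slot2)
    {
        _slot0 = slot0;
        _slot1 = slot1;
        _slot2 = slot2;
    }

    public void OnFrame(int frameCount)
    {
        // Exactly one job runs per frame; each job runs once every Interval frames.
        switch (frameCount % Interval)
        {
            case 0: _slot0(); break; // e.g. ExampleExpensiveFunction
            case 1: _slot1(); break;
            case 2: _slot2(); break;
        }
    }
}
```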

Alternatively, use a custom update manager class to update subscribed objects every n frames.

GameObject.Find and GameObject.GetComponent can be expensive, as can Camera.main in Unity versions prior to 2020.2, so it's best to avoid calling them in Update methods.

Also, try to avoid placing expensive methods in OnEnable and OnDisable if those callbacks fire often. Frequent calls to these methods can contribute to CPU spikes.

Wherever possible, run expensive functions during the initialization phase, such as in MonoBehaviour.Awake and MonoBehaviour.Start. Cache the references you need and reuse them later. See our earlier section on the Unity PlayerLoop for more detail on script execution order.

Here's an example that demonstrates inefficient use of a repeated GetComponent call:

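```csharp
using UnityEngine;

public class Example : MonoBehaviour
{
    void Update()
    {
        // Inefficient: GetComponent searches for the Renderer every frame.
        Renderer myRenderer = GetComponent<Renderer>();
        ExampleFunction(myRenderer);
    }

    // Hypothetical placeholder for whatever work uses the renderer.
    void ExampleFunction(Renderer renderer) { }
}
```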

Instead, call GetComponent only once (for example, in Awake or Start) and store the result in a field. You can then reuse the cached reference in Update without any further calls to GetComponent.

Read more about the Order of execution for event functions.

Log statements (especially in Update, LateUpdate, or FixedUpdate) can slow down performance, so disable your log statements before making a build. To do this quickly, consider combining the Conditional attribute with a preprocessor symbol.

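For example, you can create a custom logging class whose methods are marked with [Conditional("ENABLE_LOG")], so that calls to them are compiled only when the ENABLE_LOG symbol is defined.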

Generate your log messages through your custom class. If you remove the ENABLE_LOG symbol from Player Settings > Scripting Define Symbols, all of your log statements disappear at once.
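A minimal sketch of such a logging class, assuming the ENABLE_LOG symbol from the text; GameLog is a hypothetical name. System.Diagnostics.ConditionalAttribute is standard C#: when the symbol is not defined, the compiler removes the call entirely, including the evaluation of its arguments, so the string formatting cost disappears as well.

```csharp
using System;
using System.Diagnostics;

// Hypothetical logging wrapper. Calls to Log are compiled only when ENABLE_LOG is defined;
// otherwise the compiler drops each call together with the evaluation of its arguments.
public static class GameLog
{
    [Conditional("ENABLE_LOG")]
    public static void Log(object message)
    {
        // In Unity this would forward to UnityEngine.Debug.Log(message);
        // Console is used here so the sketch compiles outside Unity.
        Console.WriteLine(message);
    }
}
```

Call sites then use GameLog.Log(...) everywhere. In a Unity script that imports both UnityEngine and System.Diagnostics, write UnityEngine.Debug explicitly, because both namespaces define a Debug class.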

String and text processing is a common cause of performance problems in Unity projects. That's why removing log statements, along with their expensive string formatting, can potentially give a significant performance boost.

Similarly, empty MonoBehaviour methods still cost resources, because Unity calls them anyway, so remove blank Update or LateUpdate methods. Use preprocessor directives if you use these methods for testing:

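```csharp
using UnityEngine;

// Hypothetical class name for illustration.
public class EditorOnlyTest : MonoBehaviour
{
#if UNITY_EDITOR
    // Compiled only in the Editor, so builds carry no Update overhead.
    void Update()
    {
    }
#endif
}
```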

This lets you use Update in the Editor for testing without unnecessary overhead slipping into your build.

This blog post on 10,000 Update calls explains how Unity executes MonoBehaviour.Update.

Use the Stack Trace options in the Player Settings to control how much stack trace information is logged for each type of log message. If your application logs errors or warnings in your release build (for example, to generate crash reports in the wild), disable stack traces to improve performance.

Learn more about Stack Trace logging.

Internally, Unity doesn't use string names to address Animator, Material, or Shader properties. For speed, all property names are hashed into property IDs, and these IDs are what actually address the properties.

When using a Set or Get method on an Animator, Material, or Shader, use the overload that takes an integer ID instead of the one that takes a string. The string overloads hash the string and then pass the hashed ID to the integer overloads.

Use Animator.StringToHash for Animator property names and Shader.PropertyToID for Material and Shader property names.

Related to this is the choice of data structure, which affects performance when you iterate thousands of times per frame. Follow the MSDN guide to data structures in C# as a general reference for choosing the right structure.

Instantiate and Destroy can generate garbage and cause garbage collection (GC) spikes. This is generally a slow process, so rather than regularly instantiating and destroying GameObjects (for example, bullets fired from a gun), use pools of preallocated objects that can be reused and recycled.

Create the reusable instances at a point in the game when a CPU spike is less noticeable, such as during a menu or loading screen. Track this "pool" of objects with a collection. During gameplay, simply enable the next available instance when needed, and disable objects instead of destroying them before returning them to the pool. This reduces the number of managed allocations in your project and can prevent GC problems.
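The pool described above can be sketched as a small generic class. SimplePool and its callbacks are hypothetical names, and the sketch uses plain C# so it runs outside Unity; with GameObjects, the onGet and onRelease callbacks would typically call SetActive(true) and SetActive(false).

```csharp
using System;
using System.Collections.Generic;

// Minimal generic pool sketch (hypothetical, not a Unity API).
public sealed class SimplePool<T> where T : class
{
    private readonly Stack<T> _available = new Stack<T>();
    private readonly Func<T> _create;
    private readonly Action<T> _onGet;
    private readonly Action<T> _onRelease;

    public SimplePool(Func<T> create, Action<T> onGet, Action<T> onRelease, int prewarmCount)
    {
        _create = create;
        _onGet = onGet;
        _onRelease = onRelease;

        // Pay the allocation cost up front, e.g. during a loading screen.
        for (int i = 0; i < prewarmCount; i++)
        {
            T item = _create();
            _onRelease(item); // Start inactive.
            _available.Push(item);
        }
    }

    public int AvailableCount => _available.Count;

    // Reuse an idle instance; allocate only if the pool has run dry.
    public T Get()
    {
        T item = _available.Count > 0 ? _available.Pop() : _create();
        _onGet(item);
        return item;
    }

    // Deactivate instead of destroying, then keep the instance for reuse.
    public void Release(T item)
    {
        _onRelease(item);
        _available.Push(item);
    }
}
```

Recent Unity versions (2021.1 onward) also include a built-in UnityEngine.Pool.ObjectPool<T> with a similar create/get/release design, which may be preferable to a hand-rolled pool.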

Similarly, avoid adding components at runtime; calling AddComponent has a cost. Unity must check for duplicate or other required components every time a component is added at runtime. Instantiating a Prefab that already has the required components set up is more performant, so use this in combination with your object pool.

Relatedly, when moving Transforms, use Transform.SetPositionAndRotation to update both position and rotation at once. This avoids the overhead of modifying a Transform twice.

If you need to instantiate a GameObject at runtime, pass its parent, position, and rotation to Object.Instantiate in a single call rather than instantiating it first and then reparenting and moving it.

For more information on Object.Instantiate, see the Scripting API.

Learn how to create a simple object pooling system in Unity here.

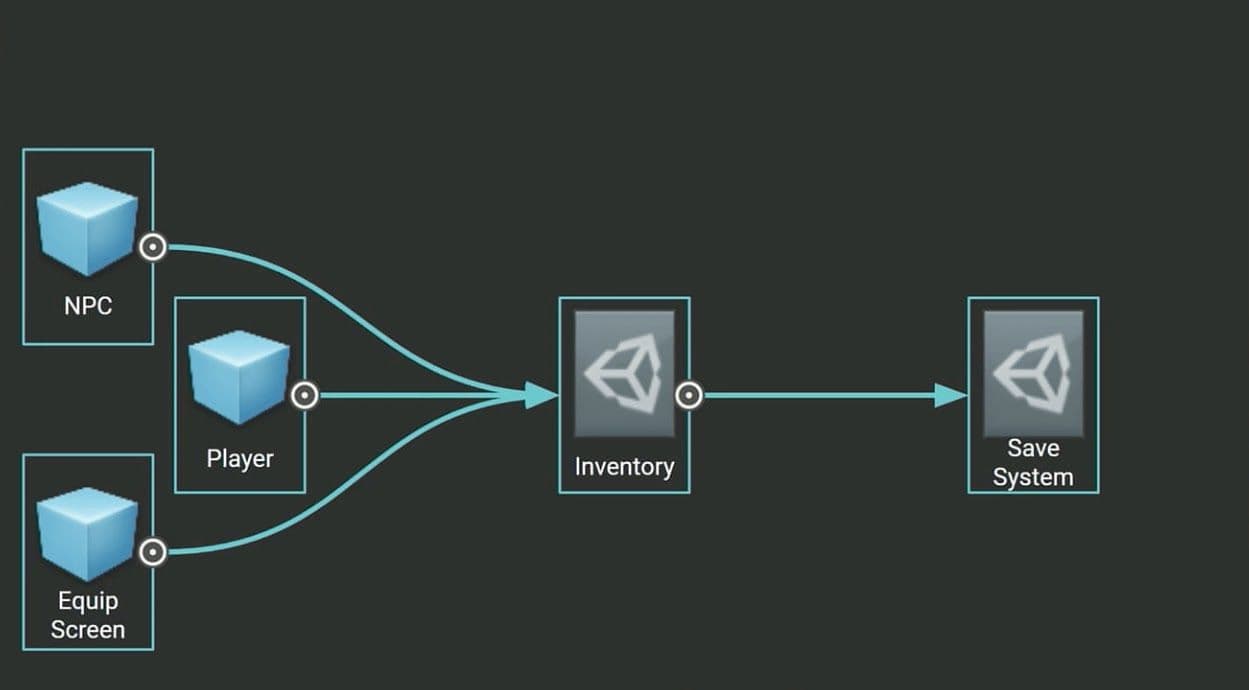

Store unchanging values or settings in a ScriptableObject instead of a MonoBehaviour. A ScriptableObject is an asset that lives inside the project. It only needs to be set up once, and it can't be attached directly to a GameObject.

Create fields in the ScriptableObject to store your values or settings, then reference the ScriptableObject from your MonoBehaviours. Using fields from the ScriptableObject can prevent unnecessary duplication of data every time you instantiate an object with that MonoBehaviour.

Watch this Introduction to ScriptableObjects tutorial and find the relevant documentation here.

One of our most comprehensive guides ever collects over 80 actionable tips on how to optimize your games for PC and console. Created by our expert Success and Accelerate Solutions engineers, these in-depth tips will help you get the most out of Unity and boost your game's performance.