Unity Publications

2022

Hex-Tiling prático em tempo real

Morten S. Mikkelsen - Jornal de Técnicas de Computação Gráfica (JCGT)

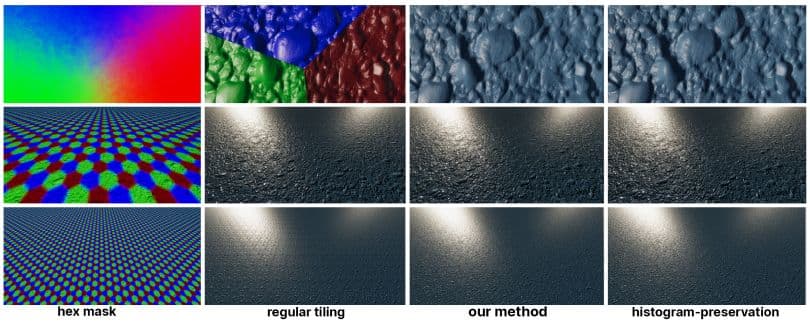

Para oferecer uma abordagem conveniente e fácil de adotar para texturas com mosaicos aleatórios no contexto de gráficos em tempo real, propomos uma adaptação do algoritmo de ruído por exemplo de Heitz e Neyret. O método original preserva o contraste usando um método de preservação de histograma que requer uma etapa de pré-computação para converter a textura de origem em uma textura de transformação e transformação inversa, ambas as quais devem ser amostradas no shader em vez da textura de origem original. Portanto, é necessária uma integração profunda com o aplicativo para que isso pareça opaco para o autor do sombreador e do material. Em nossa adaptação, omitimos a preservação do histograma e a substituímos por um novo método de mescla que nos permite obter uma amostra da textura da fonte original. Essa omissão é particularmente sensata para um mapa normal, pois ele representa as derivadas parciais de um mapa de altura. Para difundir a transição entre os blocos hexagonais, introduzimos uma métrica simples para ajustar os pesos de mistura. Para uma textura de cor, reduzimos a perda de contraste aplicando uma função de contraste diretamente aos pesos de mistura. Embora nosso método funcione para cores, enfatizamos o caso de uso de mapas normais em nosso trabalho porque o ruído não repetitivo é ideal para imitar os detalhes da superfície ao perturbar as normais.
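As a rough illustration of what "applying a contrast function directly to the blending weights" can look like, the sketch below sharpens three hex-tile weights with a power function and renormalizes them. The power function, the exponent value, and the names `sharpen_blend_weights` and `blend` are assumptions chosen for illustration; they are not the contrast function defined in the paper.

```python
def sharpen_blend_weights(weights, exponent=4.0):
    """Increase the contrast of blend weights, then renormalize to sum to 1.

    Hypothetical stand-in for a contrast function: raising each weight to a
    power > 1 makes the dominant tile win more strongly, which reduces the
    washed-out look of plain linear blending.
    """
    powered = [max(w, 0.0) ** exponent for w in weights]
    total = sum(powered)
    if total == 0.0:
        # Degenerate input: fall back to a uniform blend.
        return [1.0 / len(weights)] * len(weights)
    return [p / total for p in powered]


def blend(samples, weights):
    """Weighted sum of per-tile texture samples (scalars, for simplicity)."""
    return sum(s * w for s, w in zip(samples, weights))


# Three overlapping hex tiles contribute to one pixel.
raw_weights = [0.5, 0.3, 0.2]
sharp_weights = sharpen_blend_weights(raw_weights)
color = blend([0.9, 0.1, 0.4], sharp_weights)
```

With an exponent of 1 the weights are left unchanged, so the exponent acts as a single contrast control.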

ProtoRes: Arquitetura proto-residual para modelagem profunda da pose humana

Boris N. Oreshkin, Florent Bocquelet, Félix G. Harvey, Bay Raitt, Dominic Laflamme - ICLR 2022 (oral, 5% dos melhores trabalhos aceitos)

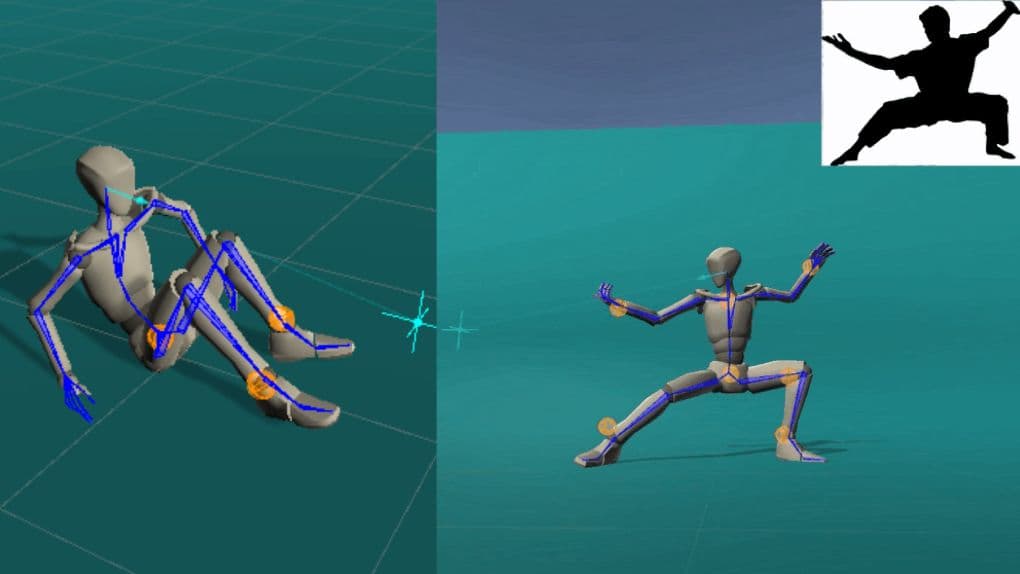

Nosso trabalho se concentra no desenvolvimento de uma representação neural aprendível da pose humana para ferramentas avançadas de animação assistida por IA. Especificamente, lidamos com o problema de construir uma pose humana estática completa com base em entradas esparsas e variáveis do usuário (por exemplo, locais e/ou orientações de um subconjunto de articulações do corpo). Para resolver esse problema, propomos uma nova arquitetura neural que combina conexões residuais com codificação de protótipo de uma pose parcialmente especificada para criar uma nova pose completa a partir do espaço latente aprendido. Mostramos que nossa arquitetura supera o desempenho de uma linha de base baseada no Transformer, tanto em termos de precisão quanto de eficiência computacional. Além disso, desenvolvemos uma interface de usuário para integrar nosso modelo neural no Unity, uma plataforma de desenvolvimento 3D em tempo real. Além disso, apresentamos dois novos conjuntos de dados que representam o problema de modelagem de pose humana estática, com base em dados de captura de movimento humano de alta qualidade, que serão divulgados publicamente junto com o código do modelo.

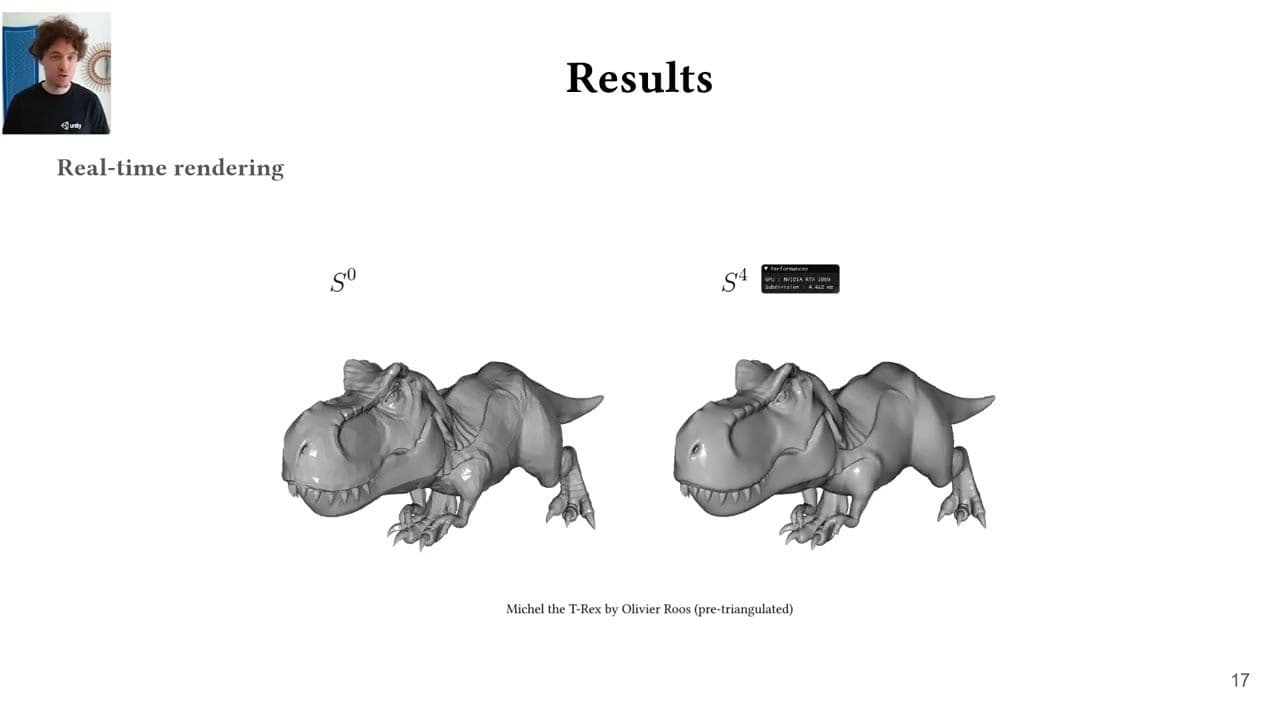

Htex: Per-Halfedge Texturing for Arbitrary Mesh Topologies

Wilhem Barbier, Jonathan Dupuy - HPG 2022

We introduce per-halfedge texturing (Htex), a GPU-friendly method for texturing arbitrary polygon meshes without an explicit parameterization. Htex builds on the insight that halfedges encode an intrinsic triangulation for polygon meshes, where each halfedge spans a unique triangle with direct adjacency information. Rather than storing a separate texture per face of the input mesh, as previous parameterization-free texturing methods do, Htex stores a square texture for each halfedge and its twin. We show that this simple change from face to halfedge induces two important properties for high-performance parameterization-free texturing. First, Htex natively supports arbitrary polygons without requiring dedicated code for, e.g., non-quad faces. Second, Htex leads to a straightforward and efficient GPU implementation that uses only three texture fetches per halfedge to produce continuous texturing across the entire mesh. We demonstrate the effectiveness of Htex by rendering production assets in real time.

A Data-Driven Paradigm for Precomputed Radiance Transfer

Laurent Belcour, Thomas Deliot, Wilhem Barbier, Cyril Soler - HPG 2022

In this work, we explore a change of paradigm to build Precomputed Radiance Transfer (PRT) methods in a data-driven way. This paradigm shift allows us to alleviate the difficulties of building traditional PRT methods, such as defining a reconstruction basis or coding a dedicated path tracer to compute a transfer function. Our objective is to pave the way for machine-learned methods by providing a simple baseline algorithm. More specifically, we demonstrate real-time rendering of indirect illumination in hair and surfaces from a few measurements of direct lighting. We build our baseline from pairs of direct and indirect illumination renderings using only standard tools such as Singular Value Decomposition (SVD) to extract both the reconstruction basis and the transfer function.

A Halfedge Refinement Rule for Parallel Loop Subdivision

Laurent Belcour, Thomas Deliot, Wilhem Barbier, Cyril Soler - HPG 2022

Bringing Linearly Transformed Cosines to Anisotropic GGX

Aakash KT, Eric Heitz, Jonathan Dupuy, P.J. Narayanan - I3D 2022

Linearly Transformed Cosines (LTCs) are a family of distributions used for real-time area-light shading thanks to their analytic integration properties. Modern game engines use an LTC approximation of the ubiquitous GGX model, but this approximation currently exists only for isotropic GGX, so anisotropic GGX is not supported. While the higher dimensionality presents a challenge in itself, we show that several additional problems arise when fitting, post-processing, storing, and interpolating LTCs in the anisotropic case. Each of these operations must be done carefully to avoid rendering artifacts. We find robust solutions for each operation by introducing and exploiting invariance properties of LTCs. As a result, we obtain a small 8^4 lookup table that provides a plausible and artifact-free LTC approximation to anisotropic GGX and brings it to real-time area-light shading.

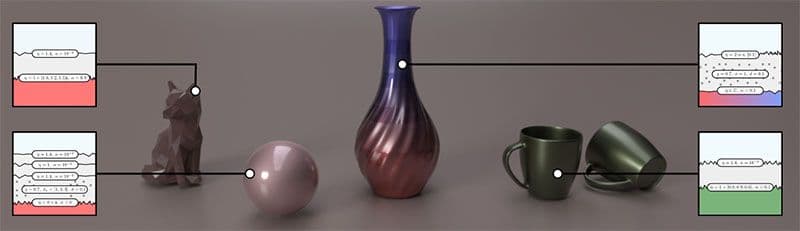

Rendering Layered Materials with Diffuse Interfaces

Heloise de Dinechin, Laurent Belcour - I3D 2022

In this work, we introduce a novel method to render, in real time, Lambertian surfaces with a rough dielectric coating. We show that the appearance of such configurations is faithfully represented with two microfacet lobes accounting for direct and indirect interactions, respectively. We numerically fit these lobes based on the first-order directional statistics (energy, mean, and variance) of light transport using 5D tables, and reduce them to 2D + 1D with analytical forms and dimension reduction. We demonstrate the quality of our method by efficiently rendering rough plastics and ceramics, closely matching ground truth. In addition, we improve a state-of-the-art layered material model to include Lambertian interfaces.

2021-2019

FC-GAGA: Fully Connected Gated Graph Architecture for Spatio-Temporal Traffic Forecasting

Boris N. Oreshkin, Arezou Amini, Lucy Coyle, Mark J. Coates - AAAI 2021

Forecasting of multivariate time series is an important problem with applications in traffic management, cellular network configuration, and quantitative finance. A special case of the problem arises when a graph is available that captures the relationships between the time series. In this paper, we propose a novel learning architecture that achieves performance competitive with or better than the best existing algorithms, without requiring knowledge of the graph. The key element of our proposed architecture is the learnable fully connected hard graph gating mechanism, which enables the use of the state-of-the-art and highly computationally efficient fully connected time-series forecasting architecture in traffic forecasting applications. Experimental results on two public traffic network datasets illustrate the value of our approach, and ablation studies confirm the importance of each element of the architecture.

A Sliced Wasserstein Loss for Neural Texture Synthesis

Eric Heitz, Kenneth Vanhoey, Thomas Chambon, Laurent Belcour - To appear at CVPR 2021

We address the problem of computing a textural loss based on the statistics extracted from the feature activations of a convolutional neural network optimized for object recognition (e.g., VGG-19). The underlying mathematical problem is the measure of the distance between two distributions in feature space. The Gram-matrix loss is the ubiquitous approximation for this problem, but it is subject to several shortcomings. Our goal is to promote the Sliced Wasserstein Distance as a replacement for it. It is theoretically proven, practical, simple to implement, and achieves visually superior results for texture synthesis by optimization or by training generative neural networks.
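To make the "simple to implement" claim concrete, here is a minimal sketch of a squared Sliced Wasserstein Distance between two equally sized point sets: project both sets onto random unit directions, sort the projections, and average the squared differences. The function name, the number of directions, and the use of plain Python lists in place of network feature activations are assumptions for illustration only.

```python
import math
import random


def sliced_wasserstein_sq(xs, ys, num_dirs=64, seed=0):
    """Estimate the squared sliced Wasserstein-2 distance between two point sets.

    xs, ys: lists of equal length holding points (tuples) of equal dimension.
    """
    if len(xs) != len(ys):
        raise ValueError("this sketch assumes equally sized sample sets")
    rng = random.Random(seed)
    dim = len(xs[0])
    total = 0.0
    for _ in range(num_dirs):
        # Draw a random unit direction (normalized Gaussian vector).
        d = [rng.gauss(0.0, 1.0) for _ in range(dim)]
        norm = math.sqrt(sum(c * c for c in d))
        d = [c / norm for c in d]
        # In 1D, optimal transport pairs the sorted projections.
        px = sorted(sum(a * b for a, b in zip(p, d)) for p in xs)
        py = sorted(sum(a * b for a, b in zip(p, d)) for p in ys)
        total += sum((a - b) ** 2 for a, b in zip(px, py)) / len(px)
    return total / num_dirs


square = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0), (1.0, 1.0)]
shifted = [(x + 2.0, y) for x, y in square]
distance = sliced_wasserstein_sq(square, shifted)
```

Because only the sorted projections matter, the result does not depend on the order of the points, which is the property that makes it a distance between distributions and not between arrays.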

Improved Shader and Texture Level of Detail Using Ray Cones

Tomas Akenine-Möller, Cyril Crassin, Jakub Boksansky, Laurent Belcour, Alexey Panteleev, Oli Wright - Published in the Journal of Computer Graphics Techniques (JCGT)

In real-time ray tracing, texture filtering is an important technique for increasing image quality. Current games, such as Minecraft with RTX on Windows 10, use ray cones to determine texture-filtering footprints. In this paper, we present several improvements to the ray-cones algorithm that improve image quality and performance and make it easier to adopt in game engines. We show that the total time per frame can decrease by around 10% in a GPU-based path tracer, and we provide a public-domain implementation.

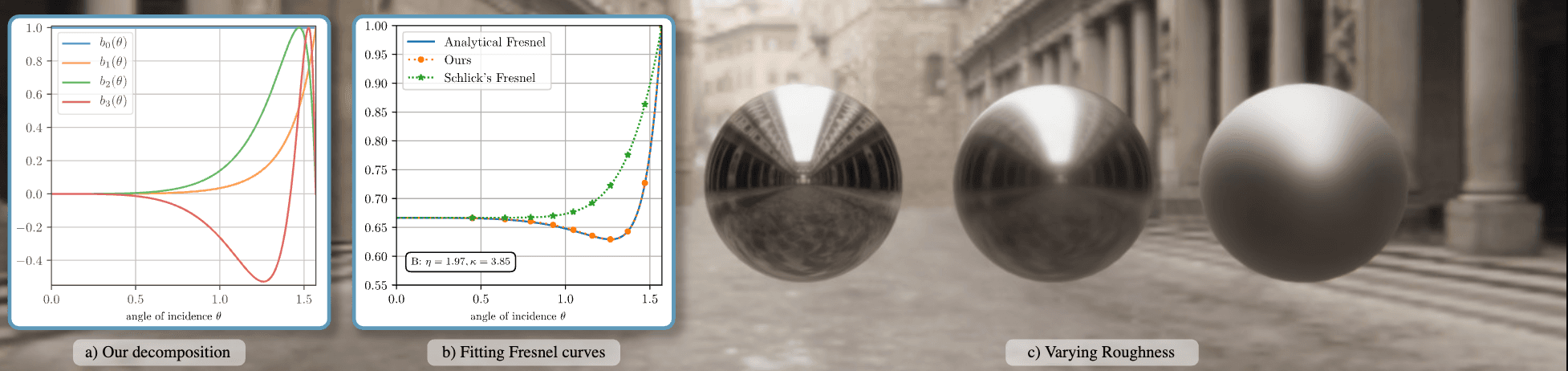

Bringing an Accurate Fresnel to Real-Time Rendering: A Preintegrable Decomposition

Laurent Belcour, Megane Bati, Pascal Barla - Published in ACM SIGGRAPH 2020 Talks and Courses

We introduce a new approximate Fresnel reflectance model that enables the accurate reproduction of ground-truth reflectance in real-time rendering engines. Our method is based on an empirical decomposition of the space of possible Fresnel curves. It is compatible with the preintegration of image-based lighting and area lights used in real-time engines. Our work permits the use of a reflectance parameterization [Gulbrandsen 2014] that was previously restricted to offline rendering.

Concurrent Binary Trees

Jonathan Dupuy - HPG 2020

We introduce the concurrent binary tree (CBT), a novel concurrent representation to build and update arbitrary binary trees in parallel. Fundamentally, our representation consists of a binary heap, i.e., a 1D array, that explicitly stores the sum-reduction tree of a bitfield. In this bitfield, each one-valued bit represents a leaf node of the binary tree encoded by the CBT, which we locate algorithmically using a binary search over the sum reduction. We show that this construction allows us to dispatch down to one thread per leaf node and that, in turn, these threads can safely split and/or remove nodes concurrently via simple bitwise operations over the bitfield. The practical benefit of CBTs lies in their ability to accelerate binary-tree-based algorithms with parallel processors. To support this claim, we leverage our representation to accelerate a longest-edge-bisection-based algorithm that computes and renders adaptive geometry for large-scale terrains entirely on the GPU. For this specific algorithm, processing speed scales linearly with the number of processors.
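The core data structure described above, a binary heap storing the sum reduction of a bitfield plus a binary search to locate the k-th one-valued bit, can be sketched in a few lines. This is a simplified, single-threaded illustration: it uses one Python integer per heap entry where a real implementation would pack bits, the function names are assumptions, and the per-bit update in `set_bit` is a sequential convenience, not the parallel update scheme of the paper.

```python
def build_sum_reduction(bits):
    """Build a 1-indexed binary heap whose leaves are the bits of the bitfield.

    len(bits) must be a power of two. heap[1] holds the total number of set
    bits, i.e. the number of leaf nodes of the encoded binary tree.
    """
    n = len(bits)
    heap = [0] * (2 * n)
    heap[n:] = bits
    for i in range(n - 1, 0, -1):
        heap[i] = heap[2 * i] + heap[2 * i + 1]
    return heap


def find_leaf(heap, k):
    """Return the bitfield index of the k-th set bit (0-based) by binary search.

    Requires 0 <= k < heap[1].
    """
    n = len(heap) // 2
    i = 1
    while i < n:
        i *= 2                # descend to the left child
        if k >= heap[i]:      # the k-th leaf is not in the left subtree
            k -= heap[i]
            i += 1            # move to the right sibling
    return i - n


def set_bit(heap, bit_index, value):
    """Set one bit and propagate the change up to the root."""
    i = len(heap) // 2 + bit_index
    delta = value - heap[i]
    while i >= 1:
        heap[i] += delta
        i //= 2


heap = build_sum_reduction([1, 0, 1, 1, 0, 0, 1, 0])
leaf_indices = [find_leaf(heap, k) for k in range(heap[1])]
```

Each value of `k` can be handled by its own thread, which is what allows one thread per leaf node to find its node independently.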

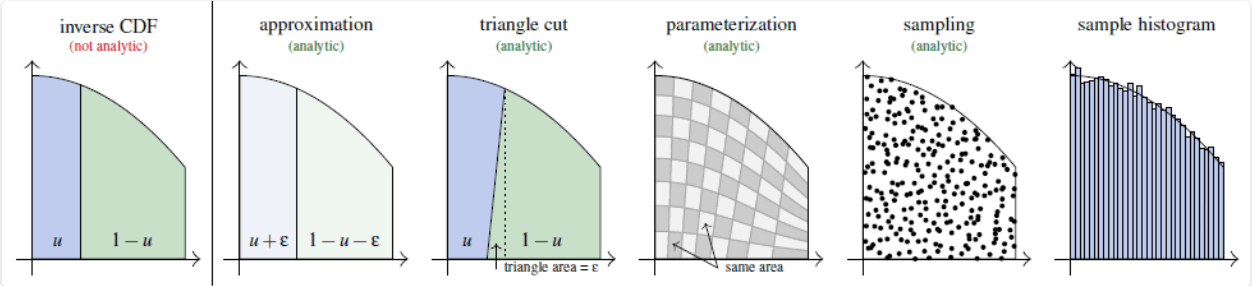

Can't Invert the CDF? The Triangle-Cut Parameterization of the Region under the Curve

Eric Heitz - EGSR 2020

We present an exact, analytic, and deterministic method for sampling densities whose Cumulative Distribution Functions (CDFs) cannot be inverted analytically. Indeed, the inverse-CDF method is often considered the way to go for sampling non-uniform densities. If the CDF is not analytically invertible, the typical fallback solutions are approximate, numerical, or non-deterministic, such as acceptance-rejection. To overcome this problem, we show how to compute an analytic area-preserving parameterization of the region under the curve of the target density. We use it to generate random points uniformly distributed under the curve of the target density, and their abscissae are thus distributed with the target density. Technically, our idea is to use an approximate analytic parameterization whose error can be represented geometrically as a triangle that is simple to cut out. This triangle-cut parameterization yields exact and analytic solutions to sampling problems that were presumably not analytically resolvable.

Rendering Layered Materials with Anisotropic Interfaces

Philippe Weier, Laurent Belcour - Published in the Journal of Computer Graphics Techniques (JCGT)

We present a lightweight and efficient method to render layered materials with anisotropic interfaces. Our work extends our previously published statistical framework to handle anisotropic microfacet models. A key insight of our work is that, when projected onto the tangent plane, BRDF lobes from an anisotropic GGX distribution are well approximated by ellipsoidal distributions aligned with the tangent frame: their covariance matrix is diagonal in this space. We leverage this property and run the isotropic layered algorithm on each anisotropy axis independently. We further update the mapping of roughness to directional variance and the evaluation of average reflectance to account for anisotropy.

Integration and Simulation of Bivariate Projective-Cauchy Distributions within Arbitrary Polygonal Domains

Jonathan Dupuy, Laurent Belcour, Eric Heitz - Technical Report 2019

Consider a uniform variate on the upper half of the unit sphere of dimension d. It is known that the straight-line projection through the center of the unit sphere onto the plane above it distributes this variate according to a d-dimensional projective-Cauchy distribution. In this work, we leverage the geometry of this construction in dimension d=2 to derive new properties for the bivariate projective-Cauchy distribution. Specifically, we reveal via geometric intuitions that integrating and simulating a bivariate projective-Cauchy distribution within an arbitrary domain translates into, respectively, measuring and sampling the solid angle subtended by the geometry of this domain as seen from the origin of the unit sphere. To make this result practical for, e.g., generating truncated variants of the bivariate projective-Cauchy distribution, we extend it in two respects. First, we provide a generalization to Cauchy distributions parameterized by location-scale-correlation coefficients. Second, we provide a specialization to polygonal domains, which leads to closed-form expressions. We provide a complete MATLAB implementation for the case of triangular domains, and briefly discuss the case of elliptical domains and how to extend our results to bivariate Student distributions.

Surface Gradient-Based Bump Mapping Framework

Morten Mikkelsen - 2020

In this paper, a new framework is proposed for layering/compositing of bump/normal maps, including support for multiple sets of texture coordinates as well as procedurally generated texture coordinates and geometry. Furthermore, we provide proper support and integration for bump maps defined on a volume, such as decal projectors, triplanar projection, and noise-based functions.

Multi-Stylization of Video Games in Real-Time Guided by G-Buffer Information

Adèle Saint-Denis, Kenneth Vanhoey, Thomas Deliot - HPG 2019

We investigate how to take advantage of modern neural style transfer techniques to modify the style of video games at runtime. Recent style transfer neural networks are pre-trained and allow for fast transfer of any style at runtime. However, a single style applies globally, over the full image, whereas we would like to provide finer authoring tools to the user. In this work, we allow the user to assign styles (by means of a style image) to various physical quantities found in the G-buffer of a deferred rendering pipeline, such as depth, normals, or object ID. Our algorithm then interpolates those styles smoothly according to the scene to be rendered: for example, a different style arises for different objects, depths, or orientations.

2019-2018

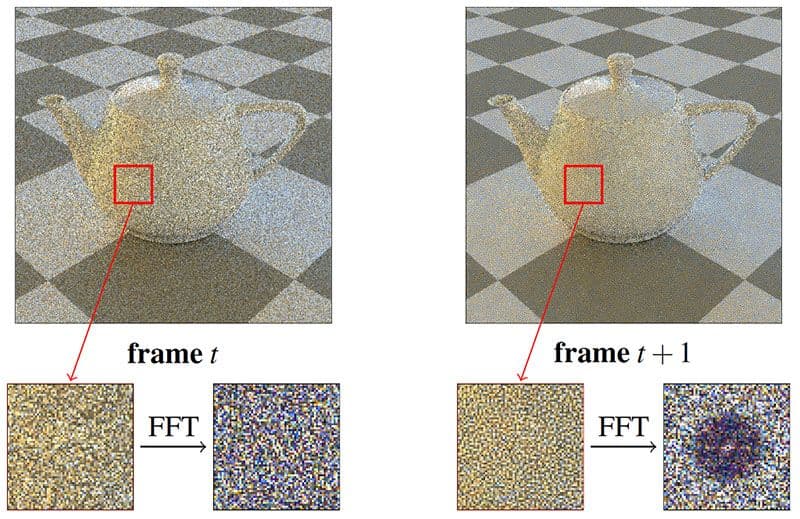

Distributing Monte Carlo Errors as a Blue Noise in Screen Space by Permuting Pixel Seeds Between Frames

Eric Heitz, Laurent Belcour - EGSR 2019

We introduce a sampler that generates per-pixel samples achieving high visual quality thanks to two key properties related to the Monte Carlo errors that it produces. First, the sequence of each pixel is an Owen-scrambled Sobol sequence that has state-of-the-art convergence properties. The Monte Carlo errors thus have low magnitudes. Second, these errors are distributed as a blue noise in screen space. This makes them visually even more acceptable. Our sampler is lightweight and fast. We implement it with a small texture and two xor operations. Our supplemental material provides comparisons against previous work for different scenes and sample counts.

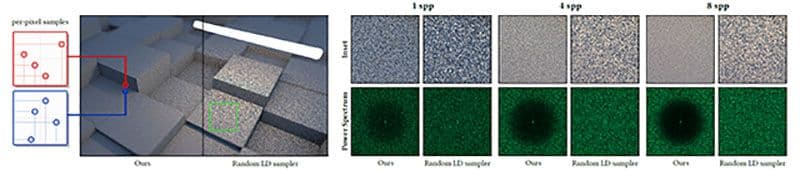

A Low-Discrepancy Sampler that Distributes Monte Carlo Errors as a Blue Noise in Screen Space

Eric Heitz, Laurent Belcour - ACM SIGGRAPH Talk 2019

We introduce a sampler that generates per-pixel samples achieving high visual quality thanks to two key properties related to the Monte Carlo errors that it produces. First, the sequence of each pixel is an Owen-scrambled Sobol sequence that has state-of-the-art convergence properties. The Monte Carlo errors thus have low magnitudes. Second, these errors are distributed as a blue noise in screen space. This makes them visually even more acceptable. Our sampler is lightweight and fast. We implement it with a small texture and two xor operations. Our supplemental material provides comparisons against previous work for different scenes and sample counts.

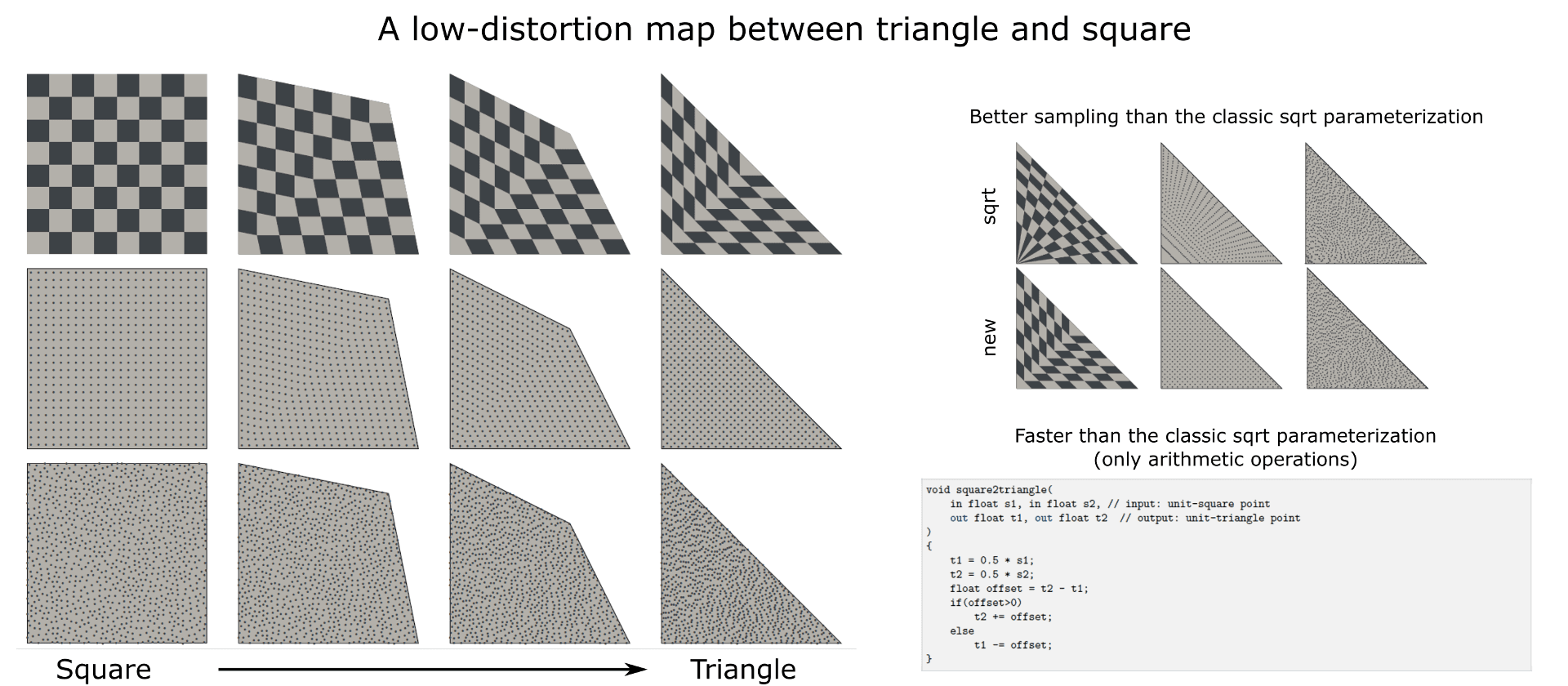

A Low-Distortion Map Between Triangle and Square

Eric Heitz - Tech Report 2019

We introduce a low-distortion map between triangle and square. This mapping yields an area-preserving parameterization that can be used for sampling random points with a uniform density in arbitrary triangles. This parameterization presents two advantages compared to the square-root parameterization typically used for triangle sampling. First, it has lower distortion and better preserves the blue-noise properties of the input samples. Second, its computation relies only on arithmetic operations (+, *), which makes it faster to evaluate.
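For intuition, the sketch below shows one area-preserving map from the unit square to barycentric coordinates that uses only additions, subtractions, and multiplications, next to the usual square-root parameterization for comparison. It is consistent with the description above, but the function names and the exact branch layout are assumptions and not a transcription of the report.

```python
import math


def square_to_triangle(u, v):
    """Map (u, v) in the unit square to barycentric coordinates (b0, b1, b2).

    Each branch is a linear map with Jacobian determinant 1/2, so the unit
    square (area 1) covers the barycentric triangle (area 1/2) uniformly.
    """
    if v > u:
        u *= 0.5
        v -= u
    else:
        v *= 0.5
        u -= v
    return (u, v, 1.0 - u - v)


def square_to_triangle_sqrt(u, v):
    """Common square-root parameterization, shown only for comparison."""
    s = math.sqrt(u)
    return (1.0 - s, v * s, s * (1.0 - v))


def sample_triangle(a, b, c, u, v):
    """Turn a 2D sample into a point inside the triangle with vertices a, b, c."""
    b0, b1, b2 = square_to_triangle(u, v)
    return tuple(b0 * pa + b1 * pb + b2 * pc for pa, pb, pc in zip(a, b, c))


point = sample_triangle((0.0, 0.0), (1.0, 0.0), (0.0, 1.0), 0.25, 0.75)
```

The first map folds the square along its diagonal and shears each half, which keeps nearby input samples close together; the square-root map squeezes one whole edge of the square into a single vertex.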

Sampling the GGX Distribution of Visible Normals

Eric Heitz - JCGT 2018

Importance sampling microfacet BSDFs using their Distribution of Visible Normals (VNDF) yields significant variance reduction in Monte Carlo rendering. In this article, we describe an efficient and exact sampling routine for the VNDF of the GGX microfacet distribution. This routine leverages the property that GGX is the distribution of normals of a truncated ellipsoid, and that sampling the GGX VNDF is equivalent to sampling the 2D projection of this truncated ellipsoid. To do that, we simplify the problem by using the linear transformation that maps the truncated ellipsoid to a hemisphere. Since linear transformations preserve the uniformity of projected areas, sampling in the hemisphere configuration and transforming the samples back to the ellipsoid configuration yields valid samples from the GGX VNDF.
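The steps named above (stretch the view direction into the hemisphere configuration, sample the projected area of the hemisphere, transform the sampled normal back to the ellipsoid configuration) can be sketched as follows. This is a minimal Python rendition under stated assumptions: the view direction is expressed in a local frame whose +Z axis is the surface normal, and all function and variable names are illustrative.

```python
import math


def normalize(v):
    n = math.sqrt(v[0] * v[0] + v[1] * v[1] + v[2] * v[2])
    return (v[0] / n, v[1] / n, v[2] / n)


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def sample_ggx_vndf(ve, alpha_x, alpha_y, u1, u2):
    """Sample a visible normal for view direction ve (unit vector, ve.z > 0)."""
    # 1. Transform the view direction to the hemisphere configuration.
    vh = normalize((alpha_x * ve[0], alpha_y * ve[1], ve[2]))
    # 2. Build an orthonormal basis (t1v, t2v, vh) around it.
    lensq = vh[0] * vh[0] + vh[1] * vh[1]
    if lensq > 0.0:
        inv = 1.0 / math.sqrt(lensq)
        t1v = (-vh[1] * inv, vh[0] * inv, 0.0)
    else:
        t1v = (1.0, 0.0, 0.0)
    t2v = cross(vh, t1v)
    # 3. Sample the projected area: a disk sample warped toward the visible half.
    r = math.sqrt(u1)
    phi = 2.0 * math.pi * u2
    t1 = r * math.cos(phi)
    t2 = r * math.sin(phi)
    s = 0.5 * (1.0 + vh[2])
    t2 = (1.0 - s) * math.sqrt(max(0.0, 1.0 - t1 * t1)) + s * t2
    # 4. Reproject onto the hemisphere.
    t3 = math.sqrt(max(0.0, 1.0 - t1 * t1 - t2 * t2))
    nh = tuple(t1 * a + t2 * b + t3 * c for a, b, c in zip(t1v, t2v, vh))
    # 5. Transform the normal back to the ellipsoid configuration.
    return normalize((alpha_x * nh[0], alpha_y * nh[1], max(0.0, nh[2])))


view = normalize((0.6, 0.2, 0.5))
normal = sample_ggx_vndf(view, 0.3, 0.7, 0.25, 0.6)
```

Every returned normal faces the viewer, which is the practical benefit over sampling the full normal distribution and discarding back-facing results.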

Analytical Calculation of the Solid Angle Subtended by an Arbitrarily Positioned Ellipsoid to a Point Source

Eric Heitz - Nuclear Instruments and Methods in Physics Research 2018

We present a geometric method for computing an ellipse that subtends the same solid-angle domain as an arbitrarily positioned ellipsoid. With this method, we can extend existing analytical solid-angle calculations for ellipses to ellipsoids. Our idea consists of applying a linear transformation to the ellipsoid such that it is transformed into a sphere, from which a disk that covers the same solid-angle domain can be computed. We demonstrate that by applying the inverse linear transformation to this disk we obtain an ellipse that subtends the same solid-angle domain as the ellipsoid. We provide a MATLAB implementation of our algorithm and validate it numerically.

A Note on Track-Length Sampling with Non-Exponential Distributions

Eric Heitz, Laurent Belcour - Tech Report 2018

Track-length sampling is the process of sampling random intervals according to a distance distribution. This means that, instead of sampling a punctual distance from the distance distribution, track-length sampling generates an interval of possible distances. The track-length sampling process is correct if the expectation of the intervals is the target distance distribution. In other words, averaging all the sampled intervals should converge towards the distance distribution as their number increases. In this note, we emphasize that the distance distribution used for sampling punctual distances and the track-length distribution used for sampling intervals are not the same in general. This difference can be surprising because, to our knowledge, track-length sampling has been mostly studied in the context of transport theory, where the distance distribution is typically exponential: in this special case, the distance distribution and the track-length distribution are both the same exponential distribution. However, they are not the same in general when the distance distribution is non-exponential.

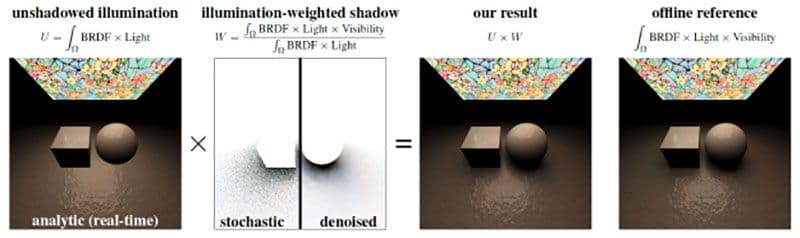

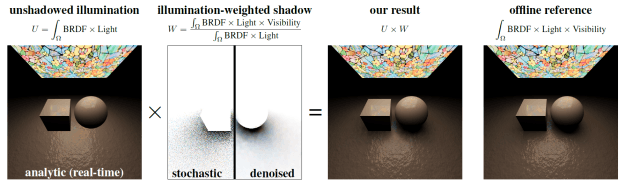

Combining Analytic Direct Illumination and Stochastic Shadows

Eric Heitz, Stephen Hill (Lucasfilm), Morgan McGuire (NVIDIA) - I3D 2018 (short paper) (Best Paper Presentation Award)

In this paper, we propose a ratio estimator of the direct-illumination equation that allows us to combine analytic illumination techniques with stochastic raytraced shadows while maintaining correctness. Our main contribution is to show that the shadowed illumination can be split into the product of the unshadowed illumination and the illumination-weighted shadow. These terms can be computed separately, possibly using different techniques, without affecting the exactness of the final result given by their product. This formulation broadens the utility of analytic illumination techniques to raytracing applications, where they were hitherto avoided because they did not incorporate shadows. We use such methods to obtain sharp and noise-free shading in the unshadowed-illumination image, and we compute the weighted-shadow image with stochastic raytracing. The advantage of restricting stochastic evaluation to the weighted-shadow image is that the final result exhibits noise only in the shadows. Furthermore, we denoise shadows separately from illumination, so that even aggressive denoising only overblurs shadows, while high-frequency shading details (textures, normal maps, etc.) are preserved.
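The split into "unshadowed illumination times illumination-weighted shadow" can be illustrated with a one-dimensional toy integral. In the sketch below, the unshadowed term is known analytically and only the weighted-shadow ratio is estimated stochastically. The integrand, the visibility functions, and all names are assumptions made up for the illustration; no renderer or light model from the paper is reproduced here.

```python
import random


def unshadowed_integrand(x):
    """Toy stand-in for the unshadowed illumination integrand on [0, 1]."""
    return 1.0 + x


# Analytic integral of (1 + x) over [0, 1]: the noise-free unshadowed term.
UNSHADOWED_ANALYTIC = 1.5


def shadowed_estimate(visibility, num_samples=20000, seed=1):
    """Ratio estimator: analytic unshadowed term times a stochastic shadow ratio."""
    rng = random.Random(seed)
    shadowed_sum = 0.0
    unshadowed_sum = 0.0
    for _ in range(num_samples):
        x = rng.random()
        f = unshadowed_integrand(x)
        shadowed_sum += f * visibility(x)
        unshadowed_sum += f
    weighted_shadow = shadowed_sum / unshadowed_sum
    return UNSHADOWED_ANALYTIC * weighted_shadow


def fully_visible(x):
    return 1.0


def half_occluded(x):
    return 1.0 if x < 0.5 else 0.0


lit = shadowed_estimate(fully_visible)        # exactly the analytic value
shadowed = shadowed_estimate(half_occluded)   # noisy estimate of 0.625
```

Where nothing is occluded the ratio is exactly 1, so the result equals the analytic term with no noise at all; noise appears only where the visibility actually varies.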

Non-Periodic Tiling of Procedural Noise Functions

Aleksandr Kirillov - HPG 2018

Procedural noise functions have many applications in computer graphics, ranging from texture synthesis to atmospheric effect simulation and landscape geometry specification. Noise can either be precomputed and stored in a texture, or evaluated directly at application runtime. This choice offers a trade-off between image variance, memory consumption, and performance.

Advanced tiling algorithms can be used to decrease visual repetition. Wang tiles allow a plane to be tiled in a non-periodic way, using a relatively small set of textures. Tiles can be arranged in a single texture map to enable the GPU to use hardware filtering.

In this paper, we present modifications to several popular procedural noise functions that directly produce texture maps containing the smallest complete Wang tile set. The findings presented in this paper enable non-periodic tiling of these noise functions and of textures based on them, both at runtime and as a preprocessing step. These findings also make it possible to decrease the repetition of noise-based effects in computer-generated images at a small performance cost, while maintaining or even reducing memory consumption.

High-Performance By-Example Noise Using a Histogram-Preserving Blending Operator

Eric Heitz, Fabrice Neyret (Inria) - HPG 2018 (Best Paper Award)

We propose a new by-example noise algorithm that takes as input a small example of a stochastic texture and synthesizes an infinite output with the same appearance. It works on any kind of random-phase input as well as on many non-random-phase inputs that are stochastic and non-periodic, typically natural textures such as moss, granite, sand, bark, etc. Our algorithm achieves high-quality results comparable to state-of-the-art procedural-noise techniques but is more than 20 times faster.

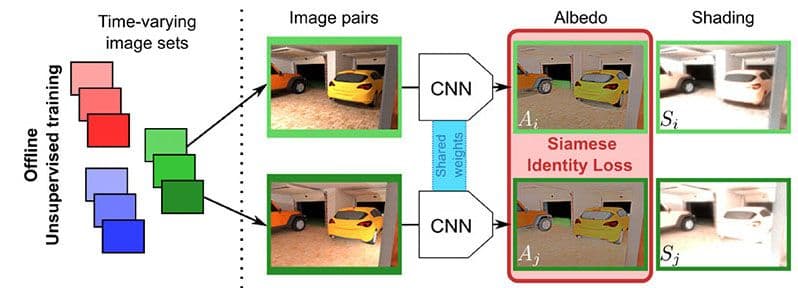

Unsupervised Deep Single-Image Intrinsic Decomposition Using Illumination-Varying Image Sequences

Louis Lettry (ETH Zürich), Kenneth Vanhoey, Luc Van Gool (ETH Zürich) - Pacific Graphics 2018 / Computer Graphics Forum

Intrinsic decomposition decomposes a photographed scene into albedo and shading. Removing shading makes it possible to "de-light" images, which can then be reused in virtually lit scenes. We propose an unsupervised learning method to solve this problem.

Recent techniques use supervised learning: they require a large set of known decompositions, which are hard to obtain. Instead, we train on unannotated images, using time-lapse imagery obtained from static webcams. We exploit the assumption that albedo is static by definition, while shading varies with lighting. We transcribe this into a siamese training scheme for deep learning.

2018-2016

Efficient Rendering of Layered Materials Using an Atomic Decomposition with Statistical Operators

Laurent Belcour - ACM SIGGRAPH 2018

We derive a novel framework for the efficient analysis and computation of light transport within layered materials. Our derivation consists of two steps. First, we decompose light transport into a set of atomic operators that act on its directional statistics. Specifically, our operators consist of reflection, refraction, scattering, and absorption, whose combinations are sufficient to describe the statistics of light scattering multiple times within layered structures. We show that the first three directional moments (energy, mean, and variance) already provide an accurate summary. Second, we extend the adding-doubling method to support arbitrary combinations of such operators efficiently. During shading, we map the directional moments to BSDF lobes. We validate that the resulting BSDF closely matches ground truth in a lightweight and efficient form. Unlike previous methods, we support an arbitrary number of textured layers, and we demonstrate practical and accurate rendering of layered materials with both an offline and a real-time implementation that require no per-material precomputation.

An Adaptive Parameterization for Material Acquisition and Rendering

Jonathan Dupuy and Wenzel Jakob (EPFL) - ACM SIGGRAPH Asia 2018

One of the key ingredients of any physically based rendering system is a detailed specification characterizing the interaction of light and matter for all materials present in a scene, typically via the Bidirectional Reflectance Distribution Function (BRDF). Despite their utility, access to real-world BRDF datasets remains limited: measurements involve scanning a four-dimensional domain at sufficient resolution, a tedious and often infeasibly time-consuming process. We propose a new parameterization that automatically adapts to the behavior of a material, warping the underlying 4D domain so that most of the volume maps to regions where the BRDF takes on non-negligible values, while irrelevant regions are strongly compressed. This adaptation only requires a brief 1D or 2D measurement of the material's retro-reflective properties. Our parameterization is unified in the sense that it combines several steps that previously required intermediate data conversions: the same mapping can be used for both BRDF acquisition and storage, and it supports efficient Monte Carlo sample generation.

Stochastic Shadows

Eric Heitz, Stephen Hill (Lucasfilm), Morgan McGuire (NVIDIA)

In this paper, we propose a ratio estimator of the direct-illumination equation that allows us to combine analytic illumination techniques with stochastic ray-traced shadows while maintaining correctness. Our main contribution is to show that the shadowed illumination can be split into the product of the unshadowed illumination and the illumination-weighted shadow. These terms can be computed separately, possibly using different techniques, without affecting the exactness of the final result given by their product.

This formulation broadens the utility of analytic illumination techniques to ray-tracing applications, where they were hitherto avoided because they did not incorporate shadows. We use such methods to obtain sharp, noise-free shading in the unshadowed-illumination image, and we compute the weighted-shadow image with stochastic ray tracing. The advantage of restricting stochastic evaluation to the weighted-shadow image is that the final result exhibits noise only in the shadows. Furthermore, we denoise shadows separately from illumination, so that even aggressive denoising only overblurs shadows, while high-frequency shading details (textures, normal maps, etc.) are preserved.
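The split described above can be illustrated numerically. The following is a minimal sketch, not the authors' implementation: it uses a hypothetical 1D integrand (`brdf_times_light`) and a hypothetical binary occluder (`visibility`), integrates the unshadowed term in closed form, and estimates the illumination-weighted shadow as a ratio of two sums over the same random samples. The product is then compared against a brute-force reference for the shadowed integral.

```python
import math
import random


def brdf_times_light(x):
    # Hypothetical smooth integrand standing in for BRDF * incoming radiance.
    return 1.0 + 0.5 * math.cos(2.0 * math.pi * x)


def visibility(x):
    # Hypothetical binary visibility: the light is occluded on [0.3, 0.6).
    return 0.0 if 0.3 <= x < 0.6 else 1.0


def unshadowed_analytic():
    # Closed-form integral of brdf_times_light over [0, 1];
    # the cosine term integrates to zero over a full period.
    return 1.0


def weighted_shadow(num_samples, rng):
    # Ratio estimate of (integral of f * V) / (integral of f),
    # with numerator and denominator sharing the same samples.
    numerator = 0.0
    denominator = 0.0
    for _ in range(num_samples):
        x = rng.random()
        f = brdf_times_light(x)
        numerator += f * visibility(x)
        denominator += f
    return numerator / denominator


def shadowed_reference(num_steps=100000):
    # Brute-force midpoint-rule integral of f * V, used only for comparison.
    h = 1.0 / num_steps
    total = 0.0
    for i in range(num_steps):
        x = (i + 0.5) * h
        total += brdf_times_light(x) * visibility(x)
    return total * h


rng = random.Random(0)
estimate = unshadowed_analytic() * weighted_shadow(200000, rng)
reference = shadowed_reference()
print(f"analytic * weighted shadow = {estimate:.4f}, reference = {reference:.4f}")
```

In this toy setup the only stochastic quantity is the weighted shadow, which always lies between 0 and 1, so any noise in the final product is confined to regions where visibility varies.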

Adaptive GPU Tessellation with Compute Shaders

Jad Khoury, Jonathan Dupuy, and Christophe Riccio - GPU Zen 2

GPU rasterizers are most efficient when primitives project onto more than a few pixels. Below this limit, the Z-buffer starts aliasing and the shading rate decreases dramatically [Riccio 12]; this makes rendering geometrically complex scenes challenging, as any moderately distant polygon will project to sub-pixel size. To minimize such sub-pixel projections, a simple solution consists of procedurally refining coarse meshes as they get closer to the camera. In this chapter, we are interested in deriving such a procedural refinement technique for arbitrary polygon meshes.
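As a rough illustration of distance-driven refinement, the sketch below picks a subdivision level so that the projected edge length stays near a target pixel size. It is a generic level-of-detail heuristic under stated assumptions, not the scheme from the chapter: the function names, the `target_px` and `max_level` parameters, and the assumption that each level halves the edge length are all hypothetical.

```python
import math


def projected_size_pixels(edge_length, distance, fov_y_radians, screen_height_px):
    # Approximate on-screen size of an edge facing the camera under a
    # perspective projection: the size shrinks proportionally to 1 / distance.
    return edge_length * screen_height_px / (
        2.0 * distance * math.tan(0.5 * fov_y_radians)
    )


def subdivision_level(edge_length, distance, fov_y_radians, screen_height_px,
                      target_px=8.0, max_level=10):
    # Assumption: each subdivision level halves the edge length, so the number
    # of levels needed to reach target_px is a base-2 logarithm.
    size = projected_size_pixels(edge_length, distance, fov_y_radians,
                                 screen_height_px)
    if size <= target_px:
        return 0  # already small enough on screen; do not refine
    level = math.ceil(math.log2(size / target_px))
    return min(level, max_level)


# Usage: the same edge gets more refinement as it approaches the camera.
fov = math.radians(90.0)
for distance in (100.0, 10.0, 1.0):
    print(distance, subdivision_level(1.0, distance, fov, 1080))
```

Clamping to `max_level` bounds the work per coarse primitive, and returning 0 for distant edges avoids generating triangles that would project to sub-pixel size.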

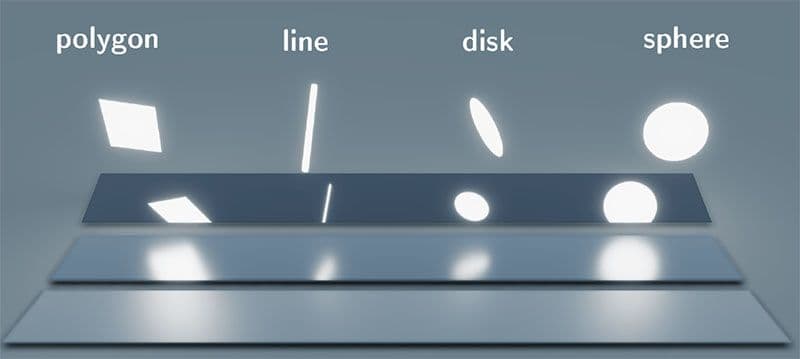

Real-Time Line- and Disk-Light Shading with Linearly Transformed Cosines

Eric Heitz (Unity Technologies) and Stephen Hill (Lucasfilm) - ACM SIGGRAPH Courses 2017

We recently introduced a new real-time area-light shading technique dedicated to lights with polygonal shapes. In this talk, we extend this area-lighting framework to support lights shaped as lines, spheres, and disks in addition to polygons.

Microfacet-Based Normal Mapping for Robust Monte Carlo Path Tracing

Vincent Schüssler (KIT), Eric Heitz (Unity Technologies), Johannes Hanika (KIT), and Carsten Dachsbacher (KIT) - ACM SIGGRAPH Asia 2017

Normal mapping imitates visual details on surfaces by using fake shading normals. However, the resulting surface model is geometrically impossible, and normal mapping is therefore often considered a fundamentally flawed approach with unavoidable problems for Monte Carlo path tracing: it breaks either the appearance (black fringes, energy loss) or the integrator (different forward and backward light transport). In this paper, we present microfacet-based normal mapping, an alternative way of faking geometric details without corrupting the robustness of Monte Carlo path tracing, so that these problems do not arise.

A Spherical Cap Preserving Parameterization for Spherical Distributions

Jonathan Dupuy, Eric Heitz, and Laurent Belcour - ACM SIGGRAPH 2017

We introduce a novel parameterization for spherical distributions that is based on a point located inside the sphere, which we call a pivot. The pivot serves as the center of a straight-line projection that maps solid angles onto the opposite side of the sphere. By transforming spherical distributions in this way, we derive novel parametric spherical distributions that can be evaluated and importance-sampled from the original distributions using simple, closed-form expressions. Moreover, we prove that if the original distribution can be sampled and/or integrated over a spherical cap, then so can the transformed distribution. We exploit the properties of our parameterization to derive efficient spherical lighting techniques for both real-time and offline rendering. Our techniques are robust, fast, easy to implement, and achieve quality superior to previous work.
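The straight-line projection through the pivot can be sketched geometrically. The code below is an illustrative reading of the abstract, not the paper's own formulation: it assumes a unit sphere centered at the origin, a direction `omega` on that sphere, and a `pivot` strictly inside it, and returns the second point where the line through `omega` and `pivot` meets the sphere. The function name `pivot_transform` is hypothetical.

```python
import math


def pivot_transform(omega, pivot):
    # Direction of the line from the point omega on the unit sphere
    # toward the pivot, which lies strictly inside the sphere.
    d = tuple(p - w for p, w in zip(pivot, omega))
    dd = sum(c * c for c in d)                    # squared length of d, non-zero
    wd = sum(w * c for w, c in zip(omega, d))     # omega . d, negative here
    # Solve |omega + t * d|^2 = 1 for the non-zero root t, using |omega| = 1.
    t = -2.0 * wd / dd
    return tuple(w + t * c for w, c in zip(omega, d))


# Usage: with the pivot at the center, every point maps to its antipode.
print(pivot_transform((0.0, 0.0, 1.0), (0.0, 0.0, 0.0)))

# With an off-center pivot the result is still on the unit sphere, and
# applying the mapping twice returns the starting point.
omega = (0.6, 0.0, 0.8)
pivot = (0.3, 0.0, 0.2)
mapped = pivot_transform(omega, pivot)
print(mapped, math.sqrt(sum(c * c for c in mapped)))
```

Because the same line passes through both intersection points, the mapping is its own inverse, which is convenient when moving between the original and the transformed distribution.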

A Practical Extension to Microfacet Theory for the Modeling of Varying Iridescence

Laurent Belcour (Unity), Pascal Barla (Inria) - ACM SIGGRAPH 2017

Thin-film iridescence makes it possible to reproduce the appearance of leather. However, this theory requires spectral rendering engines (such as Maxwell Render) to correctly integrate the change of appearance with respect to viewpoint (known as goniochromatism). This is due to aliasing in the spectral domain, as real-time renderers work with only three components (RGB) for the entire range of visible light. In this work, we show how to anti-alias a thin-film model, how to incorporate it into microfacet theory, and how to integrate it into a real-time rendering engine. This widens the range of appearances reproducible with microfacet models.

Linear-Light Shading with Linearly Transformed Cosines

Eric Heitz, Stephen Hill (Lucasfilm) - GPU Zen (book)

In this book chapter, we extend our area-light framework based on Linearly Transformed Cosines to support linear (or line) lights. Linear lights are a good approximation for cylindrical lights with a small but non-zero radius. We describe how to approximate these lights with linear lights that have similar power and shading, and we discuss the validity of this approximation.

A Practical Introduction to Frequency Analysis of Light Transport

Laurent Belcour - ACM SIGGRAPH Courses 2016

Frequency analysis of light transport expresses physically based rendering (PBR) using signal-processing tools. It is therefore well suited to predicting sampling rates, denoising, anti-aliasing, and so on. Many methods have been proposed to deal with specific cases of light transport (motion, lenses, etc.). This course aims to introduce the concepts and present practical application scenarios of frequency analysis of light transport in a unified context. To ease the understanding of the theoretical elements, frequency analysis will be introduced together with an implementation.

2016

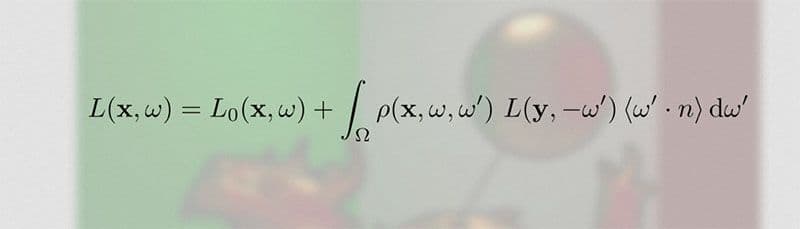

Real-Time Polygonal-Light Shading with Linearly Transformed Cosines

Eric Heitz, Jonathan Dupuy, Stephen Hill (Ubisoft), David Neubelt (Ready at Dawn Studios) - ACM SIGGRAPH 2016

Shading with area lights adds a great deal of realism to CG renders. However, it requires solving spherical equations, which makes it challenging for real-time rendering. In this project, we develop a new spherical distribution that allows us to shade physically based materials with polygonal lights in real time.