A/B testing is an effective way to optimize and improve your apps through experimentation and careful data analysis.

What is A/B testing

The idea behind A/B testing is to split similar users into groups (variants), give each variant a different version of the experience, and compare the results to decide which changes to make going forward. An A/B test can compare two or more versions of an experience to see which one performs better.

When two variants are compared, they are often referred to as the A variant and the B variant. Users are randomly assigned to each variant to prevent biases that could skew the data.

These include pre-test biases caused by events that change who enters your test. For example, a social media campaign that awards users currency to try your game right before you start your experiment brings in a cohort of users who may behave differently from your usual audience and skew your results.
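One common way to implement the random assignment described above is to hash a user identifier, so that each user lands in the same variant every time without storing the assignment. The sketch below is a minimal illustration, not how any particular service does it; the function name `assign_variant`, the experiment name, and the user ID format are all hypothetical.

```python
import hashlib


def assign_variant(user_id: str, experiment: str, variants=("A", "B")) -> str:
    """Deterministically map a user to one of the variants.

    Hypothetical helper: hashing the experiment name together with the
    user ID keeps assignments stable per user and independent between
    experiments.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    # Interpret the first 8 bytes as an integer and bucket it by variant count.
    bucket = int.from_bytes(digest[:8], "big")
    return variants[bucket % len(variants)]


# Example usage with made-up user IDs: count how many users land in each variant.
counts = {"A": 0, "B": 0}
for i in range(10_000):
    counts[assign_variant(f"user-{i}", "starting_gold")] += 1
print(counts)
```

Because the assignment depends only on the hash, it is unrelated to when or how a user arrived, which is what protects the split from the kinds of bias mentioned above.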

Why you should be A/B testing

A/B testing allows you to make educated decisions based on data rather than a hunch. Backing a decision with data is essential to confidently making the changes needed to optimize your app.

Depending on your audience size, you can test more than just an A and B variant. However, splitting the audience into more variants means each variant receives fewer users, so you need to extend the duration of the test to reach statistical significance. Otherwise, each variant may have too few samples to support a conclusion.
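To make the trade-off between variant count and test duration concrete, here is a rough sketch using the standard normal-approximation formula for comparing two proportions (for example, two retention rates). The function names, the 80% power default, and the example numbers are assumptions for illustration. The sketch also ignores the correction for multiple comparisons that a test with more than two variants would normally need.

```python
import math
from statistics import NormalDist


def sample_size_per_variant(p_control, p_treatment, alpha=0.05, power=0.8):
    """Approximate users needed per variant to detect p_control vs. p_treatment."""
    if p_control == p_treatment:
        raise ValueError("The two rates must differ to compute a sample size.")
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance level
    z_beta = NormalDist().inv_cdf(power)           # desired statistical power
    variance = p_control * (1 - p_control) + p_treatment * (1 - p_treatment)
    effect = p_treatment - p_control
    return math.ceil((z_alpha + z_beta) ** 2 * variance / effect ** 2)


def days_needed(n_per_variant, num_variants, daily_new_users):
    """Days required to fill every variant, assuming an even split of new users."""
    return math.ceil(n_per_variant * num_variants / daily_new_users)


# Hypothetical scenario: D1 retention of 40% vs. a hoped-for 42%, 2,000 new users per day.
n = sample_size_per_variant(0.40, 0.42)
for k in (2, 3, 4):
    print(k, "variants:", days_needed(n, k, daily_new_users=2000), "days")
```

With the daily audience fixed, the required duration grows in proportion to the number of variants.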

How to conduct A/B testing

The most common reasons to use A/B testing are:

- Maximizing specific player behavior (spending habits, playing habits, retention, etc.)

- Testing new and existing features to optimize performance and adoption rates for the users

- Improving specific user flows (FTUE, store user flow, level progression, reward pacing, etc.)

Defining your goals for each A/B test is important in order to utilize your data and time properly. Make sure the business goal for each experiment is clear so that you can measure KPIs that provide valuable data to push initiatives to optimize your app.

One example of an in-app A/B test would be testing the starting currency balance of a new player. Your experiment could be something similar to:

| Parameter | Value |
| --- | --- |
| Audience | New users |
| Variant A (enabled) | 100 gold |
| Variant B (control) | 0 gold |
| KPIs to measure | Retention rate (D1, D3, D7, D30), ARPDAU, and conversion rate |
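As a rough illustration of how two of these KPIs could be computed per variant, the sketch below assumes you can export each user's install date, active dates, and spender status. The data shapes and the function names `day_n_retention` and `conversion_rate` are hypothetical, not part of any specific analytics product.

```python
from datetime import date, timedelta


def day_n_retention(install_dates, activity_dates, n):
    """Share of users who were active exactly n days after installing.

    install_dates: dict of user_id -> install date
    activity_dates: dict of user_id -> set of dates the user was active
    """
    if not install_dates:
        return 0.0
    retained = sum(
        1
        for user, installed in install_dates.items()
        if installed + timedelta(days=n) in activity_dates.get(user, set())
    )
    return retained / len(install_dates)


def conversion_rate(user_ids, spender_ids):
    """Share of users in the variant who made at least one purchase."""
    users = set(user_ids)
    if not users:
        return 0.0
    return len(users & set(spender_ids)) / len(users)


# Made-up data for one variant.
installs = {"u1": date(2024, 1, 1), "u2": date(2024, 1, 1)}
activity = {"u1": {date(2024, 1, 2)}, "u2": {date(2024, 1, 3)}}
print(day_n_retention(installs, activity, 1))
print(conversion_rate(["u1", "u2"], ["u2"]))
```

Running the same functions on each variant's users gives the per-variant numbers to compare.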

Importance of control variants

A control variant is a subset of users who match the audience criteria of the test but are left unaffected by the treatment. This group gives your team a baseline, so that any lifts or drops in the treated variants are clearly visible. The KPIs set before the test determine which changes you measure.

It’s important to note that by comparing a test group’s change over time against the control variant’s change over the same period, you can separate the effect of the treatment from the impact of outside factors on your KPIs.
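The comparison against the control can be expressed as two small calculations: the relative lift of a treated variant over the control, and the difference between the two groups' changes over time (often called difference-in-differences). This is a minimal sketch with hypothetical function names and invented retention figures.

```python
def relative_lift(treatment_value, control_value):
    """Relative change of the treated variant's KPI compared to the control."""
    if control_value == 0:
        raise ValueError("Control value must be non-zero to compute a relative lift.")
    return (treatment_value - control_value) / control_value


def difference_in_differences(treat_before, treat_after, control_before, control_after):
    """Change in the treated group minus the change in the control group.

    Whatever moved both groups equally (for example, a holiday weekend)
    cancels out, leaving an estimate of the treatment's own effect.
    """
    return (treat_after - treat_before) - (control_after - control_before)


# Invented D1 retention rates: both groups rose, but the treated group rose more.
effect = difference_in_differences(0.40, 0.46, 0.40, 0.43)
print(f"Estimated treatment effect: {effect:+.2%}")
print(f"Lift vs. control: {relative_lift(0.46, 0.43):+.2%}")
```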

Conclusion

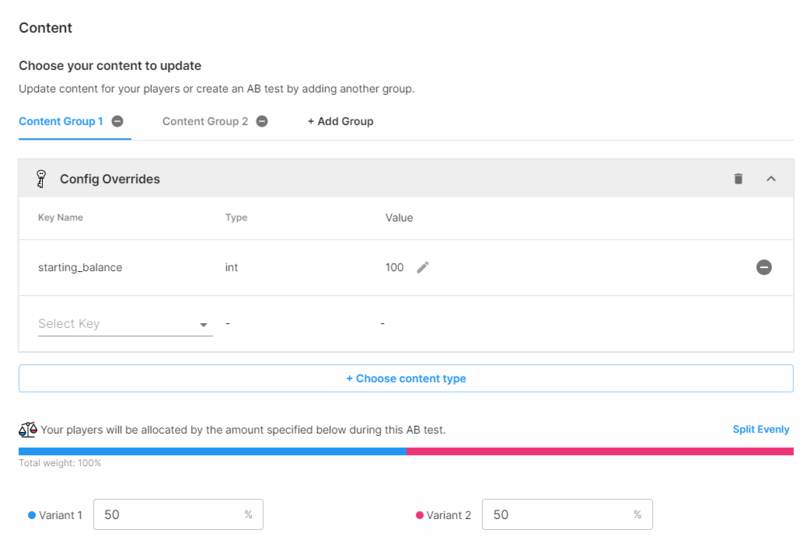

Unity Gaming Services has the ability to create A/B test campaigns using our Game Overrides system. You can view our step-by-step guide here. Be sure to check out this page as we’ll be adding more tips in the coming months.

After running the starting currency test described earlier, we can analyze the data to see how the behavior of users in each variant is affected by the starting balance, and how that in turn affects the KPIs we want to measure.

Based on our example above, we want to see if variant A had positive (or negative) impacts on our KPIs. Some questions you can ask yourself when reviewing the results are:

- Does variant A produce a higher retention rate than the control because users have more currency to spend on progressing in the game?

- Does providing users with a higher starting balance incentivize them to spend more money?

- Are users being converted to spenders at a higher rate when they have a higher starting balance?

Asking these questions helps you understand how the treatment changes player behavior and how you can optimize the experience for these users.

How to determine statistical significance

Statistical significance is a measure of how confident you can be that a difference observed in an A/B test reflects a real effect of the treatment rather than random chance. The first step in calculating statistical significance is coming up with your null and alternative hypotheses.

- Null hypothesis (H0): A statement that the change had no effect on the sample group and is assumed to be true.

- Alternative hypothesis (Ha): A prediction of the effect your treatment will have on the given sample.

Once you have chosen your hypotheses, choose your significance level (α), which is the probability of rejecting the null hypothesis when it is actually true (a false positive). The standard significance level to aim for is 0.05, which means you accept a 5% risk of concluding that the treatment had an effect when it did not.

The next step is to find your probability value (p-value), which is the probability of seeing results at least as extreme as yours if the null hypothesis were true. The lower the p-value, the stronger the evidence against the null hypothesis.

If your p-value is greater than the significance level, there is not enough evidence to reject the null hypothesis, and your results are not statistically significant.

If your p-value is lower than the significance level, there is enough evidence to reject the null hypothesis in favor of the alternative hypothesis, meaning your results are statistically significant.

An A/B test with statistically significant results indicates that the observed difference is unlikely to be due to chance alone, so you can confidently make changes based on the test to optimize your app.
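For a KPI that is a rate, such as retention or conversion, one common way to obtain the p-value is a two-proportion z-test. The sketch below is one possible approach rather than the only valid test; the function name and the sample counts are made up for illustration, and it relies on the normal approximation, which needs reasonably large groups.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(success_a, n_a, success_b, n_b):
    """Two-sided z-test for the difference between two proportions.

    Returns (z, p_value). H0: both variants share the same underlying rate.
    """
    p_a = success_a / n_a
    p_b = success_b / n_b
    pooled = (success_a + success_b) / (n_a + n_b)  # rate under H0
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("Cannot test when every user or no user converted.")
    z = (p_a - p_b) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Invented example: 450 of 1,000 users retained in variant A vs. 400 of 1,000 in control.
ALPHA = 0.05
z, p_value = two_proportion_z_test(450, 1000, 400, 1000)
if p_value < ALPHA:
    print(f"p = {p_value:.4f}: reject H0, the difference is statistically significant")
else:
    print(f"p = {p_value:.4f}: not enough evidence to reject H0")
```

For revenue-style KPIs such as ARPDAU, which are not simple rates, a different test would be more appropriate.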

Examples of A/B tests in games

A very common A/B test to run early in a game’s life compares different first time user experiences (FTUEs) in order to increase early retention among players (D1, D3, D7). A game’s FTUE is important for onboarding users and getting them interested in your app.

| Parameter | Value |
| --- | --- |
| Audience | New users |
| Variant A (variant) | Normal FTUE (10 steps) |
| Variant B (control) | Short FTUE (5 steps) |
| KPIs to measure | Retention rate (D1, D3, D7) |

Many live service games and apps offer in-app purchases (IAPs) to distribute content to users and generate revenue for the developer. One common example is testing different price points for an IAP such as an item bundle ($5 bundle vs. $20 bundle). Alternatively, you can keep the same price point but vary the contents of the bundle.

| Parameter | Value |
| --- | --- |
| Audience | Spenders |
| Variant A (enabled) | $5 bundle |
| Variant B (control) | $20 bundle |
| KPIs to measure | ARPDAU (average revenue per daily active user), LTV (lifetime value) |
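As a small worked example of the first KPI, ARPDAU over a test period can be computed as total revenue divided by the total number of daily active users summed over the same days. The function name and the figures below are invented for illustration; some teams instead average the per-day values, which gives slightly different numbers when DAU varies.

```python
def arpdau(daily_revenue, daily_active_users):
    """Average revenue per daily active user over a period.

    daily_revenue: revenue for each day of the period
    daily_active_users: DAU for the same days, in the same order
    """
    if len(daily_revenue) != len(daily_active_users):
        raise ValueError("Both lists must cover the same days.")
    total_user_days = sum(daily_active_users)
    if total_user_days == 0:
        return 0.0
    return sum(daily_revenue) / total_user_days


# Invented three-day sample for each variant of the bundle test.
print(arpdau([120.0, 150.0, 90.0], [1000, 1100, 900]))   # variant A
print(arpdau([200.0, 160.0, 140.0], [1000, 1050, 950]))  # variant B
```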

Do’s and don’ts of A/B testing

DO:

Always have an A/B test running. Keeping at least one A/B test running at all times means you don’t waste time and you keep finding new ways to optimize your app.

Perform tests on various metrics. When experimenting, test the different variables you can optimize, using a separate A/B test for each one. These can range from difficulty to ad rewards to push notification timing.

Make sure that your variant groups have similar sample sizes. If the sample sizes differ too much between groups, your results may be inaccurate, and conclusions about treatments run on those samples may not be reliable.
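One way to check whether two groups are "similar enough" in size is to ask how likely the observed split would be if assignment really followed the intended ratio. The sketch below uses a normal approximation to the binomial distribution for a two-variant test; the function name and the counts are hypothetical, and the threshold you act on is your own choice.

```python
from math import sqrt
from statistics import NormalDist


def sample_ratio_p_value(n_a, n_b, expected_share_a=0.5):
    """p-value for the observed group sizes under the intended split.

    A very small value suggests the assignment is not behaving as designed.
    """
    total = n_a + n_b
    expected_a = total * expected_share_a
    se = sqrt(total * expected_share_a * (1 - expected_share_a))
    z = (n_a - expected_a) / se
    return 2 * (1 - NormalDist().cdf(abs(z)))


# Invented counts for a test intended to split users 50/50.
print(sample_ratio_p_value(5040, 4960))  # small imbalance, plausible by chance
print(sample_ratio_p_value(5300, 4700))  # large imbalance, worth investigating
```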

DON'T:

Test too many variables at the same time. Performing too many A/B tests at the same time will muddy your results since different tests can directly influence each other.

Run your tests for too short a time. A common mistake is stopping a test too early, when the data is insufficient and can still be swayed by a wide variety of factors. For example, an in-game event that runs in the middle of your experiment can greatly influence your results, leading to low statistical significance and less reliable data.

Be afraid to get more granular with your experiments. Narrowing down your target audience to a more refined level can be very effective as long as you have a well-thought-out hypothesis and a sample size large enough to provide accurate results.